Update: Dumping Entra Connect Sync Credentials

Recently, Microsoft changed the way the Entra Connect Sync agent authenticates to Entra ID. These changes affect attacker tradecraft, as we can no longer export the sync account credentials; however, attackers can still take advantage of an Entra Connect sync account compromise and gain new opportunities that arise from the changes.

How It Used To Work

Prior to the change, an “AAD Connector” account would be created upon Entra Connect sync install. Upon creation, a randomized password would be generated and set for the connector account. The AAD Connector account was a user principal that would be assigned a special sync role, and it would authenticate just like any old user. You may have seen these before; they look like this:

In this instance, ENTRACONNECT is the hostname on which the agent is running. There are a wide variety of attack paths that can stem from compromising this account, so it is a very advantageous target for attackers.

Old Attacker Tradecraft

Thanks to AADInternals, it was simple to obtain the sync password of the AAD Connector Account used to import and export data from Entra ID. Some decryption steps are documented here, but that documentation mostly focuses on the on-premises accounts. If you are an AADInternals user, you would need to impersonate the context of the Entra Connect sync account and run the command:

Get-AADIntSyncCredentials

And that’s it! You could use your creds to do all sorts of sync mischief. Under the hood, the ADSync service account would connect to a SQL database where it would obtain a key to decrypt an “AAD configuration” blob. The plaintext password of the AAD Connector Account (Connects to Entra ID) would be in that blob. If an attacker got privileged access to a host running Entra Connect Sync, they could obtain this plaintext password and authenticate off-host, conditional access policies (CAPs) permitting. The theft of such a credential would have a huge impact on any organization, so I presume that Microsoft moved over to an application registration to reduce such a risk.

The Client Credentials Flow

If you are new to Entra ID, you can read how the Client Credentials flow works here. In a nutshell, an application registration can authenticate as itself utilizing the app roles assigned to it. To authenticate and obtain access tokens, it needs credentials provisioned to it. These credential types aren’t exclusive, and an application can have multiple. They can be in the form of:

- Secrets (plaintext password)

- Certificates

- Federated Credentials
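To make the difference between credential types concrete, here is a minimal Python sketch of the form body an application sends to the token endpoint. The parameter names (client_secret, client_assertion, client_assertion_type, grant_type) follow the Microsoft identity platform documentation for the client credentials flow; the helper name build_token_request_body and the placeholder values are hypothetical, and the signed assertion is assumed to be produced elsewhere.

```python
from urllib.parse import urlencode, parse_qs

# Assertion type used when the credential is a signed JWT (certificate case).
JWT_BEARER = "urn:ietf:params:oauth:client-assertion-type:jwt-bearer"


def build_token_request_body(client_id, scope, secret=None, assertion=None):
    """Build the x-www-form-urlencoded body for a client credentials request.

    Hypothetical helper: exactly one of `secret` or `assertion` must be given.
    """
    if (secret is None) == (assertion is None):
        raise ValueError("provide exactly one of secret or assertion")
    fields = {
        "scope": scope,
        "client_id": client_id,
        "grant_type": "client_credentials",
    }
    if secret is not None:
        # Secret credential: the plaintext password travels in the request.
        fields["client_secret"] = secret
    else:
        # Certificate credential: only a signed JWT travels in the request.
        fields["client_assertion_type"] = JWT_BEARER
        fields["client_assertion"] = assertion
    return urlencode(fields)


body = build_token_request_body(
    "11112222-bbbb-3333-cccc-4444dddd5555",
    "https://graph.microsoft.com/.default",
    assertion="<signed JWT goes here>",  # placeholder, not a real assertion
)
print(body)
```

The point of the sketch is that with a certificate, the private key never leaves the client; only a short-lived signed assertion does.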

If the application uses a certificate, it will sign an attestation when authenticating to obtain an access token. Here is an example:

POST /{tenant}/oauth2/v2.0/token HTTP/1.1 // Line breaks for clarity

Host: login.microsoftonline.com:443

Content-Type: application/x-www-form-urlencoded

scope=https%3A%2F%2Fgraph.microsoft.com%2F.default

&client_id=11112222-bbbb-3333-cccc-4444dddd5555

&client_assertion_type=urn%3Aietf%3Aparams%3Aoauth%3Aclient-assertion-type%3Ajwt-bearer

&client_assertion=eyJhbGciOiJSUzI1NiIsIng1dCI6Imd4OHRHeXN5amNScUtqRlBuZDdSRnd2d1pJMCJ9.eyJ{a lot of characters here}M8U3bSUKKJDEg

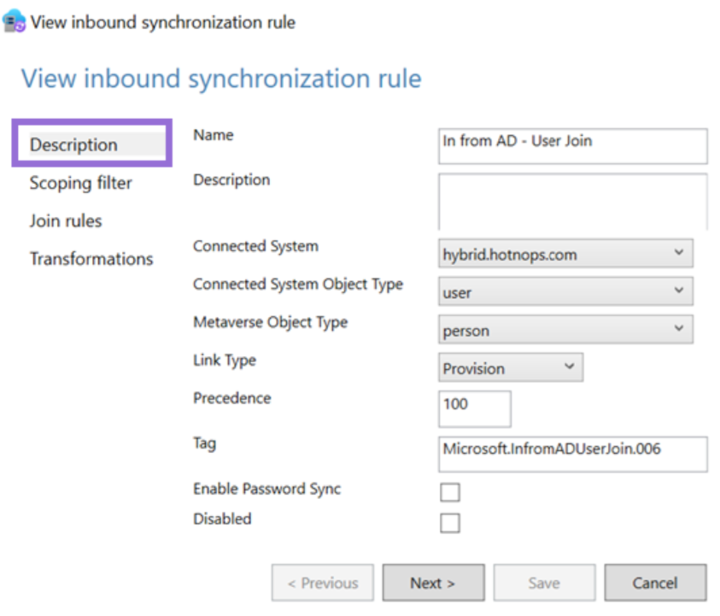

&grant_type=client_credentialsHow It Works Now

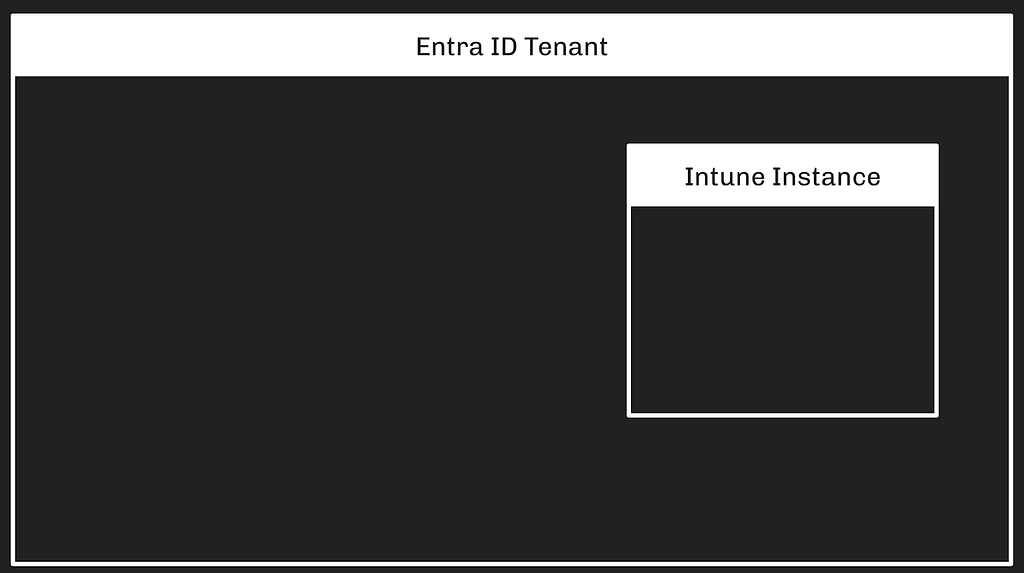

The new Entra Connect Sync agent moved from a “user” centric authentication mechanism to an app registration, which uses the client credentials flow. Since app registrations support certificate authentication, a self-signed certificate is generated on install and saved in the NGC Crypto Provider store. The installer will use the login information you provided (which must be a Global Administrator or Hybrid Identity Administrator) to create a new application registration with the self-signed certificate as an authentication certificate. Once Entra Connect sync completes installation, an application will exist in Entra ID that looks like this:

And the configured app roles:

New Tradecraft

In a perfect world, an attacker can no longer dump plaintext credentials (because there are none), and the private key that corresponds to the certificate sits on a TPM. It would appear that any AAD Connector account abuse must be performed on-host from here on out, forcing an attacker to persist on a Tier Zero asset. If there is no TPM support, we may be able to export the certificate private key, but I don’t want to rely on that. To the red teamer, it may seem all is lost, but fret not; there is still hope.

After examining the .NET assemblies provided in the new release, it appeared that a graph token of a Global Administrator or Hybrid Identity Administrator was not required to add a new key to the application registration.

This came off as strange because the application was not provisioned with either Application.ReadWrite.All or Application.ReadWrite.OwnedBy. Let’s take a look at the decompiled code in Microsoft.Azure.ActiveDirectory.AdsyncManagement.Server:

    if (!string.IsNullOrEmpty(graphToken))
    {
        httpClient.DefaultRequestHeaders.Authorization = new AuthenticationHeaderValue("Bearer", graphToken);
        string text2;
        if (!ServicePrincipalHelper.CheckUserRole(azureInstanceName, httpClient, out text2))
        {
            Tracer.TraceError(text2, Array.Empty<object>());
            throw new AccessDeniedException(text2);
        }
    }
    else
    {
        azureAuthenticationProvider = AzureAuthenticationProviderFactory.CreateAzureAuthenticationProvider(aadCredential.UserName, aadCredential.Password, InteractionMode.Desktop);
        string text4;
        string text3 = azureAuthenticationProvider.AcquireServiceToken(AzureService.MSGraph, out text4, false);
        if (string.IsNullOrEmpty(text3))
        {
            Tracer.TraceError("ServicePrincipalHelper: Failed to acquire an access token for graph. {0}", new object[]
            {
                text4
            });
            throw new AccessDeniedException(text4);
        }
        httpClient.DefaultRequestHeaders.Authorization = new AuthenticationHeaderValue("Bearer", text3);
        azureInstanceName = azureAuthenticationProvider.AzureInstanceName;
    }

That whole else block is handling the case for when a graph token (presumably that of a Global Administrator or Hybrid Identity Administrator) is not provided. How interesting!

The aadCredential username and password are a bit misleading, as they actually hold the UUID of the application registration and the SHA-256 hash of the existing certificate, as this function call shows:

    public void UpdateADSyncApplicationKey(string graphToken, string azureInstanceName, string newCertificateSHA256Hash, AADConnectorCredential currentCredential)
    {
        Tracer.TraceVerbose("Enter UpdateADSyncApplicationKey", Array.Empty<object>());
        ServicePrincipalHelper.UpdateADSyncApplicationKey(this.syncEngineHandle.GetAzureActiveDirectoryCredential(ADSyncManagementService.DefaultAadConnectorGuid), graphToken, azureInstanceName, newCertificateSHA256Hash, currentCredential);
    }

So what we need is the cert hash of the existing certificate credential and the ability to load it into our AzureAuthenticationProviderFactory. Once we do, we can use that certificate to do two things:

- Obtain a graph token to make the addKey API call

- Obtain a proof of possession (POP) assertion proving that we are currently in possession of the private key

Further down in the function, the following code executes if no graph token is provided:

    string proof = azureAuthenticationProvider.GenerateProofOfPossessionToken(applicationByAppId.id);
    Guid guid2 = ServicePrincipalHelper.AddApplicationKey(graphApplication, guid, proof, x509Certificate);
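To give a feel for what a proof of possession token contains, here is a minimal Python sketch that builds the unsigned portion (header and claims) of such a JWT. The claim values follow the Graph addKey documentation: the audience is the fixed value 00000002-0000-0000-c000-000000000000, the issuer is the ID of the application, and the expiry is the not-before time plus ten minutes. The helper names are hypothetical, the header is simplified (a real token would also identify the signing certificate), and the RS256 signature with the existing private key is deliberately left out, so this is not what GenerateProofOfPossessionToken does internally.

```python
import base64
import json
import time
from typing import Optional

# Fixed audience required for addKey proof tokens per the Graph documentation.
POP_AUDIENCE = "00000002-0000-0000-c000-000000000000"


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as used in JWTs."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def build_pop_signing_input(app_object_id: str, now: Optional[int] = None) -> str:
    """Return '<header>.<claims>', the string that would then be signed.

    Hypothetical helper; signing with the certificate's private key is omitted.
    """
    now = int(time.time()) if now is None else now
    header = {"alg": "RS256", "typ": "JWT"}  # simplified header (assumption)
    claims = {
        "aud": POP_AUDIENCE,
        "iss": app_object_id,  # object ID of the application registration
        "nbf": now,
        "exp": now + 600,  # nbf + 10 minutes
    }
    return ".".join(
        b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )


print(build_pop_signing_input("00000000-0000-0000-0000-000000000000"))
```

Whoever can sign this input with a key already registered on the application can produce a valid proof, which is the crux of what follows.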

The graphApplication already has an HttpClient with a Bearer token set:

    private static Guid AddApplicationKey(GraphApplication graphApplication, Guid applicationId, string proof, X509Certificate2 cert)
    {
        KeyCredentialModel keyCredential = new KeyCredentialModel
        {
            Type = "AsymmetricX509Cert",
            Key = cert.GetRawCertData(),
            Usage = "Verify",
            StartDateTime = cert.NotBefore.ToUniversalTime(),
            EndDateTime = cert.NotAfter.ToUniversalTime(),
            DisplayName = "CN=Entra Connect Sync Provisioning"
        };
        return graphApplication.AddKey(applicationId, keyCredential, proof).KeyId.Value;
    }

    public KeyCredentialModel AddKey(Guid appId, KeyCredentialModel keyCredential, string proof)
    {
        if (appId == Guid.Empty)
        {
            throw new ArgumentException("appId");
        }
        if (keyCredential == null)
        {
            throw new ArgumentNullException("keyCredential");
        }
        if (string.IsNullOrEmpty(proof))
        {
            throw new ArgumentNullException("proof");
        }
        string requestUri = string.Format(this.graphEndpoint + "/v1.0/applications(appId='{0}')/addKey", appId);
        string passwordCredential = null;
        string content = JsonConvert.SerializeObject(new
        {
            keyCredential,
            proof,
            passwordCredential
        }, ODataResponse.JsonSettings.Value);
        KeyCredentialModel result;
        using (HttpRequestMessage httpRequestMessage = new HttpRequestMessage(HttpMethod.Post, requestUri)
        {
            Content = new StringContent(content, Encoding.UTF8, "application/json")
        })
        {
            using (HttpResponseMessage httpResponseMessage = base.SendRequest(httpRequestMessage))
            {
                result = JsonConvert.DeserializeObject<KeyCredentialModel>(httpResponseMessage.Content.ReadAsStringAsync().GetAwaiter().GetResult());
            }
        }
        return result;
    }
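For readers who do not want to parse the C#, the request that AddKey assembles can be sketched in Python as follows. The URL shape and the three top-level fields (keyCredential, proof, passwordCredential) come from the decompiled code above; the camelCase property names inside keyCredential and the base64 encoding of the raw certificate are assumptions based on how Graph represents a keyCredential, and the helper name build_add_key_request and all sample values are hypothetical. Nothing is sent over the network.

```python
import base64
import json


def build_add_key_request(graph_endpoint, app_id, cert_der, proof, start, end):
    """Return (url, json_body) for an addKey call; hypothetical helper.

    cert_der is the raw DER-encoded certificate (public part only).
    start/end are ISO 8601 timestamps taken from the certificate validity.
    """
    url = f"{graph_endpoint}/v1.0/applications(appId='{app_id}')/addKey"
    body = {
        "keyCredential": {
            "type": "AsymmetricX509Cert",
            "usage": "Verify",
            # Binary values are carried as base64 text in JSON (assumption).
            "key": base64.b64encode(cert_der).decode("ascii"),
            "startDateTime": start,
            "endDateTime": end,
            "displayName": "CN=Entra Connect Sync Provisioning",
        },
        "proof": proof,  # POP assertion signed with an existing key
        "passwordCredential": None,  # null when adding a certificate
    }
    return url, json.dumps(body)


url, payload = build_add_key_request(
    "https://graph.microsoft.com",
    "11112222-bbbb-3333-cccc-4444dddd5555",
    b"\x30\x82\x01\x0a",  # placeholder bytes, not a real certificate
    "<signed POP assertion>",
    "2025-01-01T00:00:00Z",
    "2026-01-01T00:00:00Z",
)
print(url)
print(payload)
```

Note that the body carries only the new public certificate and the proof; the Bearer token in the Authorization header is supplied separately by the HTTP client.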

We now know what is needed to add a new key. As an attacker, we can generate a new private key, build a certificate, obtain a POP token, and register the certificate with the application registration. This gives us persistent, off-host access to the application registration. To do this, we can build out a .NET assembly that performs the necessary steps in the context of the ADSync account.

Proof of Concept

Our goal is to prove that we can still persist our access to a compromised AAD connector account, even if a TPM protects the private key. We can accomplish this by generating our own certificate and adding it to the service principal.

First, we need to obtain an access token and a signed POP assertion. Both can be produced with the certificate that is installed on the host by running this program here:

Our graph token looks like this:

And the POP assertion looks like this:

According to the documentation here, this should be enough to add credentials to our application registration, given that we have at least Application.ReadWrite.OwnedBy.

However, our application does not have any of the required app roles!

How can this be? Well, if you are an astute reader, or simply have an attention span past the first paragraph of Graph documentation, you’ll see this banger on the addKey page:

As it turns out, if you have access to an existing key, you can just add your own with no permissions needed!

How have I missed this?!

Mystery solved, and our path is clear for how we can persist our access to the AAD connector account off-host.

If we run our AddKey binary (posted here) with just our access token and POP assertion, you can see that we successfully added our key.

And the updated key is reflected here:

Red team crisis averted; we can keep our sync tradecraft, albeit a bit more “detectable”. Also, as a general takeaway, the ability to sign POP assertions equals the ability for any application to add new certificates to itself, which is pretty cool.

New Opportunities

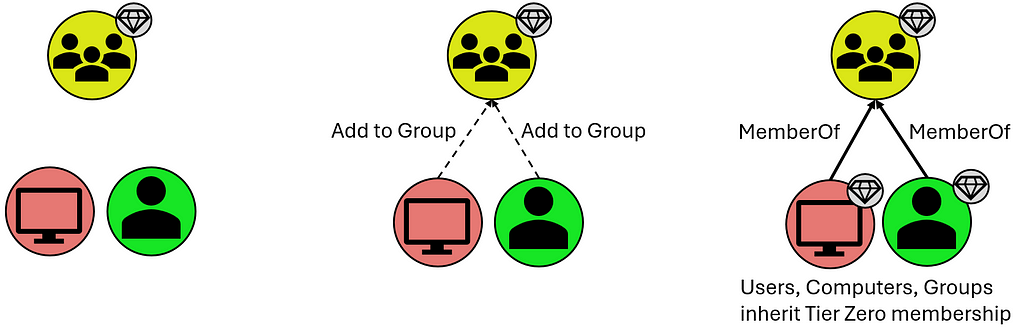

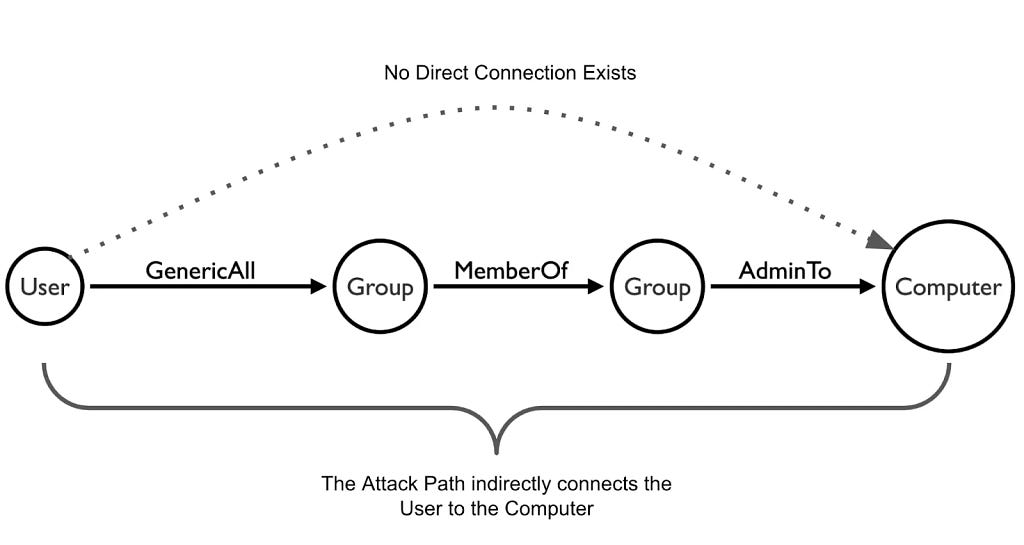

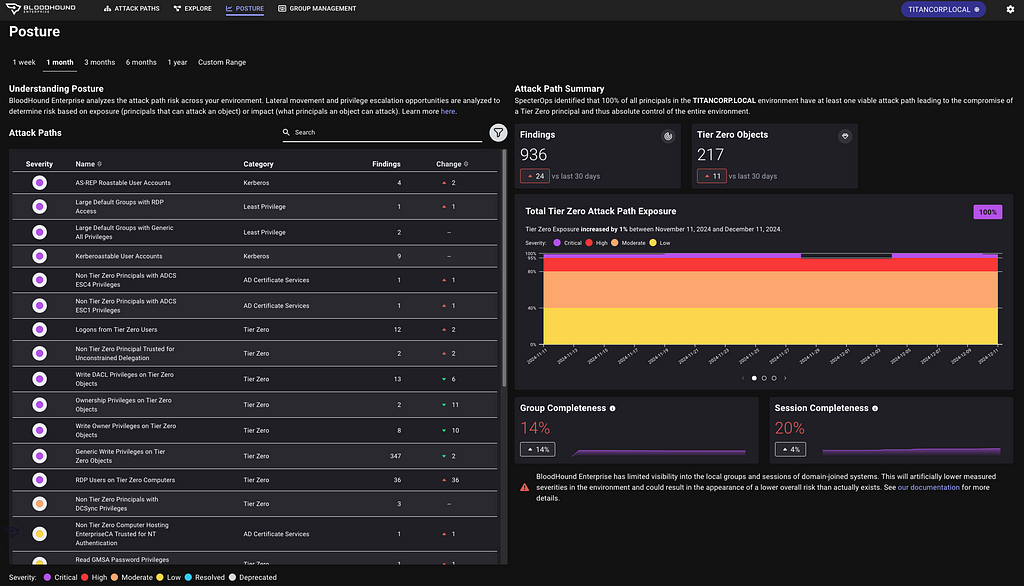

Here is a list of users who could compromise the sync account previously:

Previously, a Privileged Authentication Administrator or higher could change the password of the sync account; however, doing so would break the sync agent, because it could no longer authenticate. This left only Global Administrator and Hybrid Identity Administrator as viable attack paths for a red teamer. Let’s look at the new pseudo-graph:

This update presents an attacker with the opportunity to add credentials without interrupting the normal day-to-day flow of the sync agent. It is also far more common to have principals assigned the Application Administrator or Cloud Application Administrator role, which makes the attack surface for sync attacks larger. While tradecraft may have shifted for on-premises attackers, the Entra ID attack surface has expanded. In addition, Conditional Access typically doesn’t affect service principals, so the likelihood of being able to use these credentials off-target is significantly higher. Ultimately, this is a cleaner yet more abuse-prone implementation.

Detections

Here is the good news. Detecting a new credential on an Application Registration is easy and a dead giveaway that something interesting is happening. Since the normal flow of UpdateADSyncApplicationKey removes the old key, the existence of more than one certificate on the Entra Connect application registration is a good indication that something is amiss. Should an attacker choose to be stealthy and actually replace the certificate that the Entra Connect Sync agent uses, then there are still detections for credential manipulation on an application registration. Here is a KQL query that surfaced all of my key additions:

    AuditLogs
    | where ActivityDisplayName has_any ("Add service principal credentials", "Update application", "Add key credential")
    | where TargetResources[0].type =~ "Application"
    | extend AppName = tostring(TargetResources[0].displayName)
    | extend ChangedProps = TargetResources[0].modifiedProperties
    | extend Initiator = tostring(InitiatedBy.user.displayName)
    | project TimeGenerated, AppName, ActivityDisplayName, Initiator, ChangedProps
    | where ChangedProps has_any ("keyCredentials", "passwordCredentials")
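The "more than one certificate" heuristic mentioned above can also be checked directly against the application object. Here is a minimal Python sketch, assuming you have already fetched the application registration as JSON from Graph and that its keyCredentials entries carry type, keyId, and startDateTime fields; the function name flag_extra_certificates and the sample data are hypothetical.

```python
def flag_extra_certificates(application: dict) -> list:
    """Return certificate credentials (oldest first) if more than one exists.

    An empty list means zero or one certificate is present, which is the
    expected steady state for the Entra Connect application registration.
    """
    keys = application.get("keyCredentials") or []
    certs = [k for k in keys if k.get("type") == "AsymmetricX509Cert"]
    if len(certs) <= 1:
        return []
    # ISO 8601 timestamps in the same format sort correctly as strings.
    return sorted(certs, key=lambda k: k.get("startDateTime", ""))


# Hypothetical application object with a second, later-added certificate.
sample_app = {
    "displayName": "Example Entra Connect application",
    "keyCredentials": [
        {"keyId": "key-b", "type": "AsymmetricX509Cert",
         "startDateTime": "2025-06-01T00:00:00Z"},
        {"keyId": "key-a", "type": "AsymmetricX509Cert",
         "startDateTime": "2025-01-01T00:00:00Z"},
    ],
}

for cred in flag_extra_certificates(sample_app):
    print(cred["keyId"], cred["startDateTime"])
```

This is a point-in-time check and would miss an attacker who replaces the certificate outright, so it complements the audit log query rather than replacing it.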

Takeaways

This is a brand-new update for Entra Connect Sync, so I don’t expect to see it in the wild for some time. I’m not quite sure I’m sold on the ability for an application to “roll its own keys”, as the documentation states. If access to a key is equivalent to the ability to produce more keys, then what’s the point of an expiration date?

Update: Dumping Entra Connect Sync Credentials was originally published in Posts By SpecterOps Team Members on Medium, where people are continuing the conversation by highlighting and responding to this story.