We are entering a decade where software is no longer just an enabler of business — it is the primary mechanism through which intelligence is created, scaled and monetized across the enterprise.

For CIOs, this is not another technology cycle. This is a leadership inflection point.

Across boardrooms, investor discussions and strategic planning sessions, the conversation is shifting rapidly:

- From “How fast can we build software?”

- To “How intelligently can we design, govern and scale decision systems?”

This is a fundamental reframing of the CIO mandate.

The organizations that recognize this shift early will not just move faster — they will compound intelligence faster, creating asymmetric advantage in markets where speed alone is no longer sufficient.

The following perspective must therefore be read not as a technology trend, but as a strategic operating model shift for CIOs entering 2026 and beyond.

The next inflection point: Software development is no longer about code

Over the past two decades, software development has evolved through predictable phases — manual coding, agile acceleration, cloud-native scaling and DevOps automation. But as we enter 2026, that trajectory is no longer linear.

We are now witnessing a structural break.

Generative AI and agentic systems are not simply accelerating development — they are redefining the very nature of software creation, ownership and accountability.

This shift mirrors the broader transformation outlined in the CIO 3.0 paradigm (CXO 3.0: How intelligent leadership will redefine enterprise value), in which technology leadership has moved from operating systems to architecting enterprise intelligence itself.

In software development, this translates into a fundamental question for boards, CIOs, CTOs, CISOs and chief AI officers (CAIOs): Are we still building software or are we now orchestrating intelligence systems that build themselves?

What makes this transition particularly consequential is that it is already happening quietly but decisively.

Across high-performing organizations:

- AI-generated code is already contributing meaningfully to production systems

- Development cycles are compressing from weeks to days — and in some cases, hours

- Decision-making is increasingly embedded directly into software systems rather than layered on top

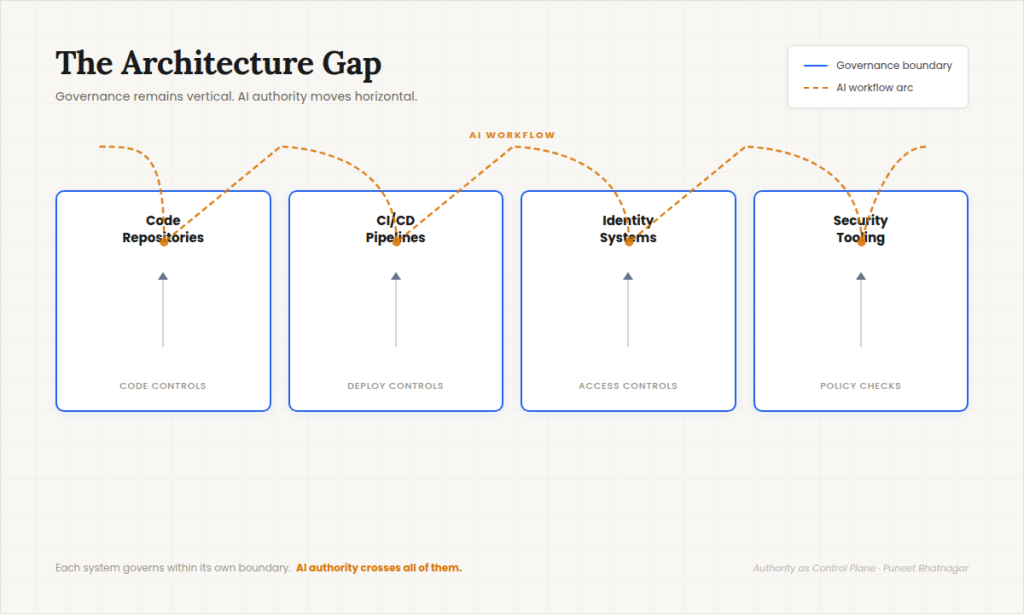

Yet, in many enterprises, governance, accountability and operating models have not kept pace.

This gap between capability acceleration and governance maturity is where both the greatest opportunity and the greatest risk now reside.

2 forces reshaping software development in 2026

1. AI across the full software development lifecycle (SDLC)

Generative AI has moved beyond coding assistance into end-to-end lifecycle orchestration, consistent with broader enterprise AI adoption trends where organizations are embedding AI across multiple functions (McKinsey, The state of AI in 2025: Agents, innovation and transformation):

- Planning & Design → AI-driven requirements synthesis, architecture generation

- Development → Code generation, refactoring, pattern enforcement

- Testing → Autonomous test case creation and validation

- Deployment → Intelligent CI/CD pipelines with adaptive optimization

- Maintenance → Self-healing systems, anomaly detection, auto-remediation

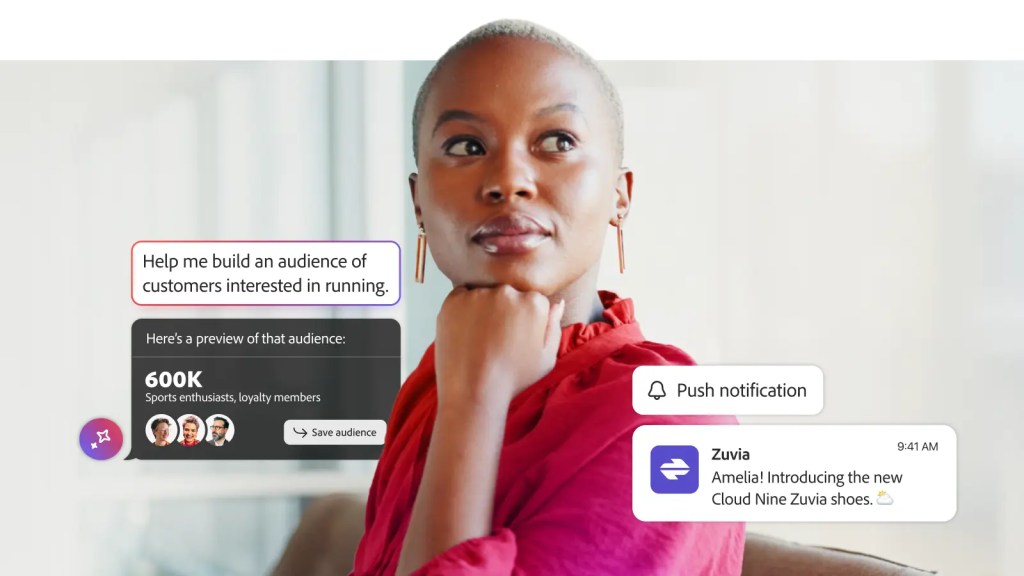

The developer is no longer just a coder. The developer is becoming a curator of intent, constraints and outcomes.

The compression of the SDLC

What historically required:

- Weeks of design

- Months of development

- Iterative testing cycles

Can now be orchestrated through multi-agent AI systems operating in parallel.

This introduces a new dynamic: Software development is no longer a sequential process — it is becoming a continuously adaptive system.

For CIOs, this means:

- Traditional governance checkpoints may become bottlenecks

- Legacy approval workflows may inhibit innovation velocity

- Organizational design must evolve alongside technical capability

2. Intensifying competition in AI coding ecosystems

The competitive landscape is accelerating rapidly, particularly across ecosystems led by:

- Microsoft (GitHub Copilot, Azure AI)

- Google (Gemini, Vertex AI, developer tooling)

- Apple (on-device AI, developer ecosystem integration)

Events like Google I/O and Microsoft Build are no longer just developer conferences; they are strategic battlegrounds for control over the future of software creation.

The stakes are clear:

- Whoever controls the AI development stack controls the next generation of digital economies

- Whoever defines the developer experience defines the innovation velocity of entire ecosystems

Platform gravity is becoming strategic gravity

The implication for CIOs is profound.

Choosing a development ecosystem is no longer a tooling decision — it is a strategic alignment decision that determines:

- Data gravity

- Talent alignment

- Innovation velocity

- Long-term vendor dependency

In effect: Your AI development platform choice is becoming your enterprise’s innovation ceiling.

From SDLC to IDLC: The rise of the Intelligent Development Lifecycle

Traditional SDLC frameworks are becoming obsolete.

In their place, a new paradigm is emerging: The Intelligent Development Lifecycle (IDLC)

This is not simply an evolution — it is a redefinition of how software is conceived, built and governed.

Key characteristics of IDLC:

- Intent-driven development: Developers define what and why, not just how

- Agentic execution: AI agents perform multi-step development tasks autonomously

- Continuous learning loops: Systems improve based on real-time feedback and usage patterns

- Embedded governance: Compliance, security and auditability are built into execution (NIST AI Risk Management Framework)

- Decision-centric architecture: The primary output is not code — it is decision capability

IDLC as a leadership operating model

IDLC is not just a development methodology.

It is an enterprise operating model for intelligence creation.

It changes:

- How teams are structured

- How accountability is defined

- How value is measured

For CIOs, adopting IDLC means shifting from:

- Managing delivery pipelines

- To governing decision supply chains

The emerging reality: Developers as intelligence orchestrators

As AI agents take over repetitive and even complex coding tasks, the developer role is undergoing a profound transformation.

From:

- Writing code line by line

- Debugging manually

- Managing environments

To:

- Designing system intent

- Governing AI agents

- Ensuring ethical and secure outcomes

- Orchestrating multi-agent collaboration

This is not a reduction in developer relevance.

It is an elevation of developer responsibility.

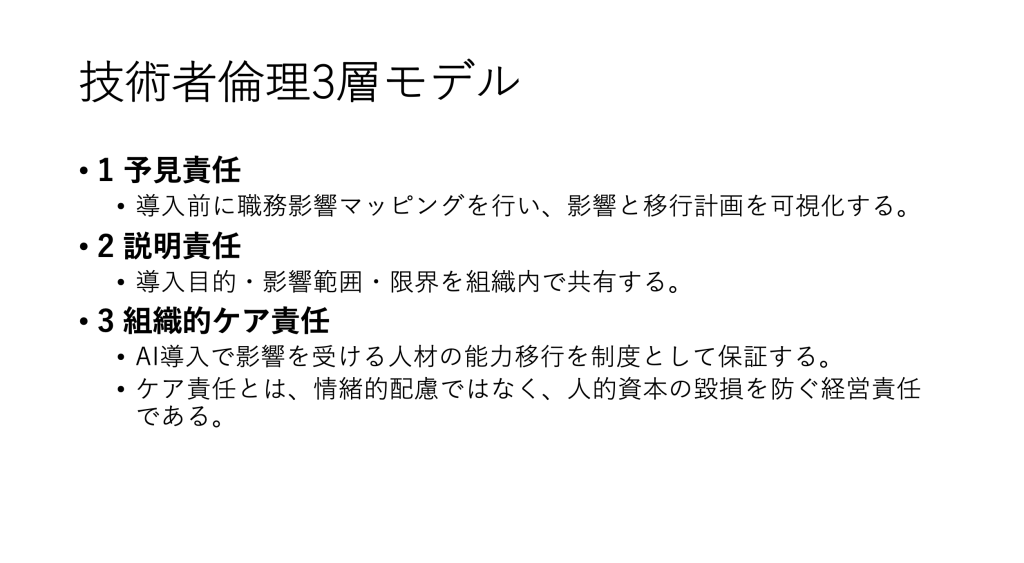

Talent transformation is now a CIO priority

This shift introduces a critical challenge:

Most current developer skill models are not aligned to this future state.

CIOs must now proactively invest in:

- AI-native engineering skills

- Prompt and intent engineering

- Model governance literacy

- Cross-disciplinary collaboration

Because future developers are not just technical specialists: they are decision designers.

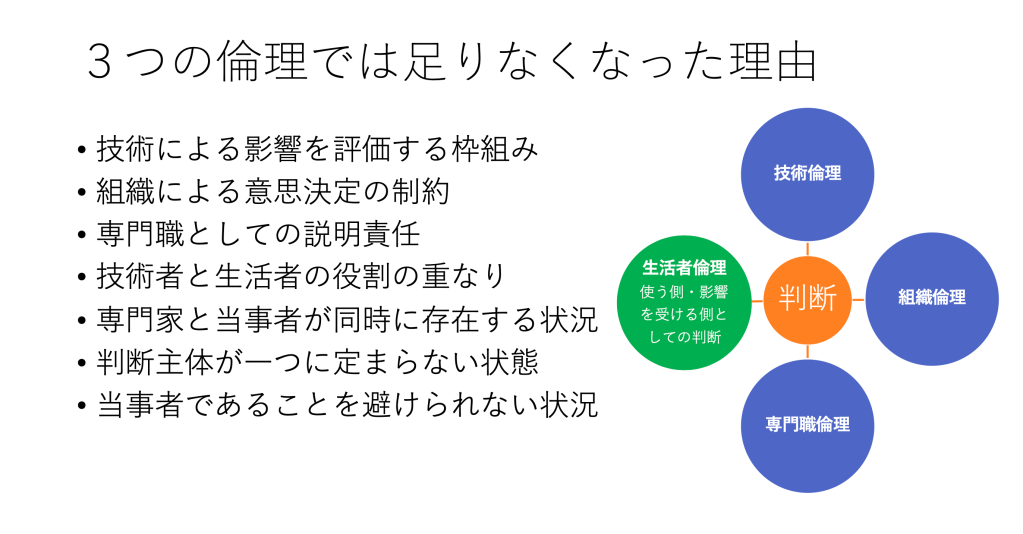

The CXO convergence: Why this is no longer just a CTO conversation

The transformation of software development is not confined to engineering teams.

It now sits at the intersection of four critical leadership domains, reflecting the broader evolution of CIOs into strategic business leaders shaping enterprise outcomes (State of the CIO):

CIO: The intelligence architect

- Aligns AI-driven development with enterprise strategy

- Ensures scalability and integration across platforms

- Drives value realization from software investments

CTO: The innovation orchestrator

- Defines architecture patterns for AI-native development

- Leads platform engineering and developer experience

- Drives competitive differentiation

CISO: The trust enforcer

- Ensures secure AI-generated code

- Governs data lineage and model integrity

- Mitigates risks from autonomous systems

CAIO: The intelligence governor

This convergence reflects a broader reality: Software development is no longer a technical function — it is an enterprise risk, value and governance function.

Introducing a new framework: SAFE-AI DevOps

To navigate this transformation, enterprises require a disciplined, board-ready approach.

SAFE-AI DevOps Framework (Secure, Adaptive, Federated, Explainable AI Development Operations)

This is a next-generation operating model for AI-driven software development.

1. Secure by Design (S)

- AI-generated code must meet zero-trust security principles

- Continuous vulnerability scanning integrated into AI pipelines

- Secure prompt engineering and model access controls

CISO-led mandate: Trust is the new runtime environment
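To make the "Secure by Design" principle concrete, the controls above can be pictured as a gate that every proposed change must pass before it merges. The following is a minimal sketch, not a reference implementation: the `ChangeRequest` fields, the `gate_violations` function and the rule that AI-generated changes need a named human reviewer are all illustrative assumptions, not part of any specific tool or standard.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ChangeRequest:
    """Hypothetical metadata a pipeline might attach to a proposed change."""
    change_id: str
    ai_generated: bool
    vulnerability_scan_passed: bool
    human_reviewer: Optional[str]  # None means nobody has signed off yet


def gate_violations(change: ChangeRequest) -> List[str]:
    """Return the reasons a change should be blocked; an empty list means it may proceed."""
    violations = []
    # Zero-trust stance: no change is trusted by default, whoever or whatever wrote it.
    if not change.vulnerability_scan_passed:
        violations.append("vulnerability scan missing or failed")
    # Assumed policy: AI-generated changes additionally need a named human reviewer.
    if change.ai_generated and change.human_reviewer is None:
        violations.append("AI-generated change has no human reviewer")
    return violations


if __name__ == "__main__":
    pending = ChangeRequest("chg-001", ai_generated=True,
                            vulnerability_scan_passed=True, human_reviewer=None)
    print(gate_violations(pending))
```

The design choice worth noting is that the gate returns reasons rather than a bare yes or no, so each blocked change leaves behind an explanation that can feed the audit trail.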

2. Adaptive Intelligence (A)

- Systems learn and evolve continuously

- AI models adapt to changing requirements and environments

- Feedback loops drive improvement across the lifecycle

CIO-led mandate: Learning velocity is the new productivity metric

3. Federated Development (F)

- Multi-agent collaboration across distributed environments

- Integration across cloud, edge and on-prem ecosystems

CTO-led mandate: Scale innovation without losing control

4. Explainable Execution (E)

- Every AI-generated decision must be traceable

- Audit trails for code generation and deployment

CAIO-led mandate: Explainability is the new compliance baseline

5. AI-Native DevOps (AI)

- Autonomous CI/CD pipelines

- Predictive deployment optimization

- Self-healing systems and automated incident response

Cross-CXO mandate: Automation is no longer optional — it is foundational

The competitive battlefield: Ecosystems, not tools

The next phase of competition is not about individual tools.

It is about ecosystem dominance, as hyperscalers invest heavily in AI infrastructure, platforms and developer ecosystems (McKinsey Global Tech Agenda 2026).

Key battlegrounds:

- Developer platforms

- Model ecosystems

- Data gravity

- AI infrastructure

As highlighted in a previous CIO.com perspective, infrastructure itself is becoming a strategic intelligence decision, not just an operational one.

The risk dimension: AI-generated code is not inherently safe

While productivity gains are undeniable, risks are escalating:

- Hallucinated code vulnerabilities

- Licensing and IP violations

- Model bias and ethical concerns

- Regulatory exposure (EU AI Act, NIST AI RMF)

This creates a new category of risk: AI Development Risk

This requires structured governance aligned with emerging regulatory and risk frameworks (NIST AI Risk Management Framework).

Blockchain and quantum: The next convergence layer

As we move beyond 2026, two additional forces will reshape AI-driven development:

Blockchain

- Immutable audit trails for AI-generated code

- Smart contracts governing software execution
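The core idea behind an immutable audit trail can be illustrated without a full blockchain. The sketch below uses a simple hash chain, in which each entry stores the hash of the one before it, so that editing any earlier record becomes detectable. It is a simplified stand-in, assuming a single trusted log and hypothetical record fields such as `commit` and `model`; a real blockchain adds distribution and consensus, which this example does not attempt.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(payload: dict, prev_hash: str) -> str:
    """Hash the payload together with the previous entry's hash."""
    body = json.dumps({"payload": payload, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> None:
    """Append a record that is cryptographically linked to the previous one."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"payload": payload, "prev": prev_hash,
                "hash": entry_hash(payload, prev_hash)})


def verify(log: list) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["payload"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    trail = []
    # Hypothetical records describing AI-generated changes.
    append_entry(trail, {"commit": "abc123", "model": "example-model"})
    append_entry(trail, {"commit": "def456", "model": "example-model"})
    print(verify(trail))                          # chain is intact
    trail[0]["payload"]["model"] = "edited-later"
    print(verify(trail))                          # tampering is detected
```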

Quantum Computing

- Breakthroughs in optimization and cryptography

Together with AI, they form a converging intelligence stack that will redefine software engineering, consistent with broader enterprise transformation trends toward intelligent systems.

Boardroom implications: What investors and directors must understand

The shift to AI-driven development is not just technical — it is financial.

Research shows AI delivers the greatest impact when integrated into enterprise strategy rather than siloed initiatives (BankInfoSecurity: C-Suite Leaders Must Rewire Businesses for True AI Value).

Key board-level questions:

- How much of our software is AI-generated?

- What governance exists for AI-generated decisions?

- How do we ensure security and compliance at scale?

- What is our dependency on external AI ecosystems?

- How does this impact enterprise valuation?

Because the reality is: Software is no longer a cost center — it is a capital engine.

The new metrics: Measuring success in AI-driven development

Traditional metrics are insufficient.

Old metrics:

- Lines of code

- Development velocity

- Bug counts

New metrics:

- Decision throughput

- AI-assisted productivity ratio

- Model governance maturity

- Security incident reduction

- Time-to-intelligence (TTI)
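These metrics only become useful once they have agreed definitions. As a hedged illustration, the sketch below computes two of them under assumed definitions: the AI-assisted productivity ratio is taken here to mean the share of merged changes that were AI-assisted, and security incident reduction is taken to mean the fractional drop against a baseline period. Both definitions and both function names are assumptions made for this example; each enterprise would need to define its own.

```python
def ai_assisted_ratio(ai_assisted_changes: int, total_changes: int) -> float:
    """Assumed definition: share of merged changes that were AI-assisted."""
    if total_changes <= 0:
        raise ValueError("total_changes must be positive")
    return ai_assisted_changes / total_changes


def incident_reduction(baseline_incidents: int, current_incidents: int) -> float:
    """Assumed definition: fractional drop in incidents versus a baseline period."""
    if baseline_incidents <= 0:
        raise ValueError("baseline_incidents must be positive")
    return (baseline_incidents - current_incidents) / baseline_incidents


if __name__ == "__main__":
    # Illustrative numbers only, not real data.
    print(ai_assisted_ratio(ai_assisted_changes=30, total_changes=120))
    print(incident_reduction(baseline_incidents=20, current_incidents=15))
```

With the illustrative inputs shown, both calls evaluate to 0.25: a quarter of changes were AI-assisted, and incidents fell by a quarter against the baseline.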

The leadership mandate for 2026 and beyond

The transformation of software development demands a new leadership mindset.

Three defining mandates for 2026:

- Architect intelligence, not just applications

- Govern AI as an enterprise asset

- Align ecosystems with strategy

The future of software is a leadership decision

As we look ahead to 2026 and beyond, one reality becomes undeniable: The future of software development will not be decided by developers alone.

It will be shaped by:

- CIOs who architect intelligence

- CTOs who orchestrate innovation

- CISOs who enforce trust

- CAIOs who govern AI responsibly

- Boards that understand the strategic implications

Because in this new era, code is no longer the product. Intelligence is. And the organizations that learn fastest will not just build better software — they will redefine entire industries.

This article is published as part of the Foundry Expert Contributor Network.
