Hypersonic Supply Chain Attacks: One Solution That Didn’t Need to Know the Payload

In 2026, the question for security leaders is not whether a supply chain attack is coming. Every serious organization should assume it is. The question is whether their defense architecture can stop a payload it has never seen before. That question carries even more weight now that trusted agentic automation is becoming the norm.

In three weeks this spring, three threat actors each ran a tier-1 supply chain attack against widely deployed software: LiteLLM, a core AI infrastructure package; Axios, the most downloaded HTTP client in the JavaScript ecosystem; and CPU-Z, a trusted system diagnostic tool. Different vectors, different actors, different techniques. SentinelOne® stopped all three on the day each attack launched, with no prior knowledge of any payload.

The more important story is the how. Each attack arrived as a zero-day at the moment of execution. Each exploited a trusted delivery channel: an AI coding agent running with unrestricted permissions, a phantom dependency staged eighteen hours before detonation, a properly signed binary from an official vendor domain. No signature existed for any of them. No indicator of attack (IOA) matched.

SentinelOne stopped all three, and that outcome is a direct answer to the question every security leader now faces: What does your defense do when the attack arrives through a channel you explicitly trust, carrying a payload you have never seen before?

The AI Arms Race in Security is Underway

Adversaries are no longer running manual campaigns at human speed. Anthropic disclosed a campaign, detected in mid-September 2025, in which a Chinese state-sponsored group jailbroke an AI coding assistant and ran a full espionage operation against approximately 30 organizations. The AI handled 80–90% of tactical operations autonomously (reconnaissance, vulnerability discovery, exploit development, credential harvesting, lateral movement, and exfiltration) with minimal human direction. Anthropic noted only 4–6 human decision points per campaign. The attack achieved limited success across those targets, but the trajectory is clear: AI is compressing the human bottleneck in offensive operations. Security programs designed around manual-speed adversaries are calibrated to a threat that has since sped up.

The LiteLLM attack is the clearest recent example of what this looks like inside an AI development workflow. On March 24, 2026, threat actor TeamPCP compromised the LiteLLM Python package by obtaining PyPI credentials through a prior supply chain compromise of Trivy, a widely used open-source security scanner. Two malicious versions (1.82.7 and 1.82.8) were published. Any system with those versions installed during the exposure window executed the embedded credential theft payload automatically. In one confirmed detection, an AI coding agent running with unrestricted permissions (claude --dangerously-skip-permissions) auto-updated to the infected version without human review: no approval, no alert, no visible action before the payload ran. SentinelOne detected and blocked the malicious Python execution on the same day across multiple environments. Most organizations running AI development workflows didn’t know they were exposed until after the fact. The gap that human review processes don’t reach is wide, and it grows with every AI agent added to a pipeline.
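For teams checking their own exposure after the fact, a minimal Python sketch along these lines can report whether an environment currently has one of the two versions named above. The helper names (`is_compromised`, `installed_litellm_version`) are hypothetical, and the check only reflects what is installed right now: an environment that pulled a bad version and later upgraded would pass, so treat it as a first look rather than proof of a clean history.

```python
from importlib import metadata
from typing import Optional

# The two versions the article names as malicious. Nothing else is assumed bad.
COMPROMISED_LITELLM = frozenset({"1.82.7", "1.82.8"})


def is_compromised(version: str) -> bool:
    """Return True if the version string is one of the two named versions."""
    return version.strip() in COMPROMISED_LITELLM


def installed_litellm_version() -> Optional[str]:
    """Return the litellm version installed in this environment, or None."""
    try:
        return metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_litellm_version()
    if found is None:
        print("litellm is not installed in this environment")
    elif is_compromised(found):
        print(f"litellm {found} matches a version reported as malicious")
    else:
        print(f"litellm {found} is not one of the two reported versions")
```

A version lookup like this is the kind of known-indicator check that only becomes possible once the bad versions are public, which is why it complements execution-time detection instead of replacing it.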

Security programs were built for a different adversary. Vulnerability management, triage queues, patch cadences: all of it assumes an attacker who moves at a pace where human response can still close the window. This year’s SentinelOne Annual Threat Report documented what happens when that assumption breaks: adversaries are shifting left, embedding malicious logic in the build process before software ever reaches production. Likewise, the Verizon 2025 Data Breach Investigations Report found that edge device vulnerabilities are now being mass-exploited at or before the day of CVE publication, while organizations take a median of 32 days to patch them. The old model worked when it was designed. Attackers just weren’t running AI yet.

Three Attacks, One Common Failure Mode

Each attack ran through the same gap. Authorization was treated as a sufficient security boundary, and when authorization is automated, that assumption has no floor.

An AI agent with install permissions doesn’t stop to ask whether a package looks right. It installs. Trusted source, valid credentials, done. Supply chain attacks have always exploited trusted delivery channels, but a human at the keyboard introduces at least one friction point: Someone might notice something off, slow down, ask a question. Agents don’t do that. They execute at the speed of the next API call. When you give an agent install permissions, you’ve extended your trust model to cover everything it will ever run. Authorized agents execute exactly what their permissions allow. That’s the design. Treating permission as a proxy for safety is what turns a compromised supply chain hypersonic.

LiteLLM was compromised via credentials stolen through Trivy, a security scanner. The Axios attacker bypassed every npm security control the project had in place by exploiting a legacy access token the maintainers had forgotten to revoke. The CPU-Z attackers went directly after the distribution infrastructure of its vendor, CPUID, so anyone who downloaded from the official website got a properly signed binary with a payload inside. In all three cases, the identity was legitimate. The intent wasn’t.

SentinelOne’s Annual Threat Report named the failure precisely: “The identity is verified, but the intent has been subverted, rendering traditional access controls ineffective against the resulting supply chain contamination.” Signature libraries, IOA rule sets, reputation lookups: All of them check authorization. None check intent. These attacks were designed to exploit exactly that. When the authorization model runs automatically, so does the exposure.

What Actually Stopped Them

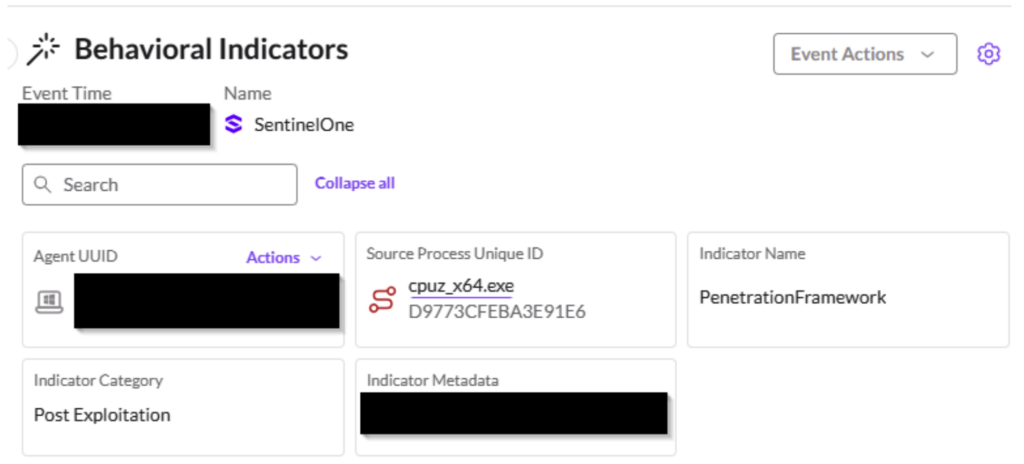

In each incident, SentinelOne’s on-device behavioral AI flagged the execution pattern, not a known signature or hash for that specific attack.

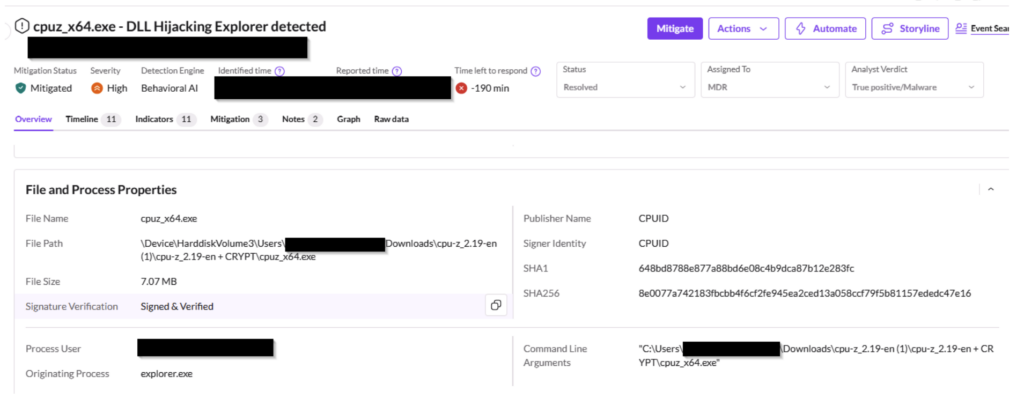

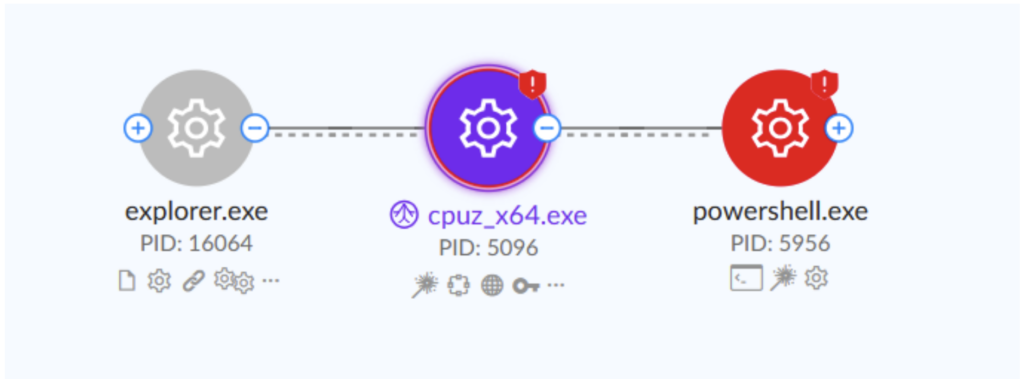

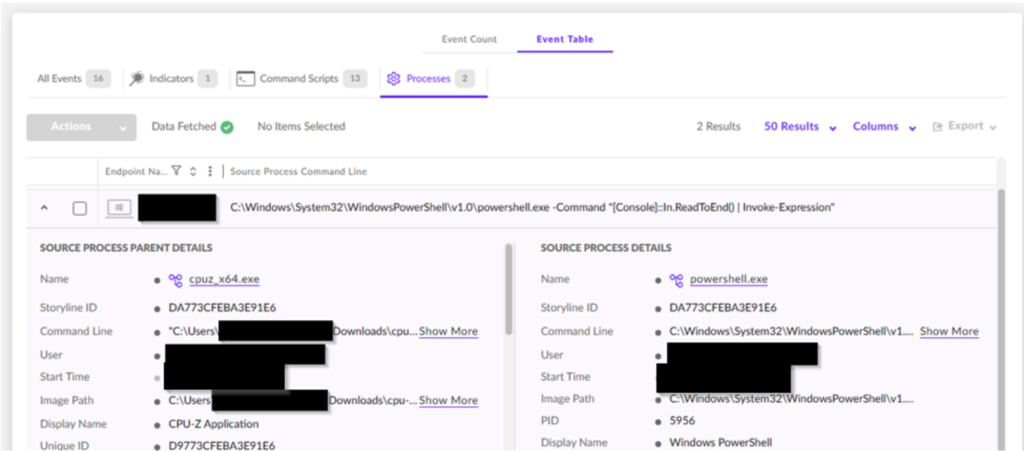

The LiteLLM detection flagged a Python interpreter executing Base64-decoded code in a spawned subprocess. SentinelOne killed the process preemptively, terminating 424 related events in under 44 seconds, before any human was in a position to observe it. The Axios detection, via the Lunar behavioral engine, caught PowerShell executing under a renamed binary from a non-standard path. The engine flagged the technique regardless of what the payload contained. The first infection occurred 89 seconds after the malicious package went live; the behavioral detection fired on the day of publication. The CPU-Z detection flagged cpuz_x64.exe building an anomalous process chain: spawning PowerShell, which spawned csc.exe, which spawned cvtres.exe. CPU-Z does not do that. The platform terminated the execution chain mid-attack during a 19-hour active distribution window.
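The idea behind the CPU-Z detection, judging a process by what it spawns rather than by its hash or signature, can be illustrated with a small Python sketch. This is not SentinelOne's engine or its logic: the `EXPECTED_CHILDREN` baseline, its entries, and the `unexpected_edges` helper are all hypothetical, and a production behavioral model weighs far more than parent-child names.

```python
from typing import Dict, FrozenSet, List, Sequence, Tuple

# Hypothetical baseline of the child processes each parent is expected to spawn.
# The entries are illustrative assumptions, not vendor-supplied data.
EXPECTED_CHILDREN: Dict[str, FrozenSet[str]] = {
    "cpuz_x64.exe": frozenset(),  # assumed: a diagnostic tool spawns nothing
    "powershell.exe": frozenset({"conhost.exe"}),
}


def unexpected_edges(chain: Sequence[str]) -> List[Tuple[str, str]]:
    """Return (parent, child) pairs in the chain that the baseline does not allow.

    `chain` lists process image names from ancestor to descendant. Parents
    missing from the baseline are skipped, so only modelled programs can
    produce a finding.
    """
    names = [name.lower() for name in chain]
    findings: List[Tuple[str, str]] = []
    for parent, child in zip(names, names[1:]):
        allowed = EXPECTED_CHILDREN.get(parent)
        if allowed is not None and child not in allowed:
            findings.append((parent, child))
    return findings


if __name__ == "__main__":
    observed = ["cpuz_x64.exe", "powershell.exe", "csc.exe", "cvtres.exe"]
    for parent, child in unexpected_edges(observed):
        print(f"unexpected spawn: {parent} -> {child}")
```

Run against the chain described above, the sketch reports the first two links (the signed tool launching PowerShell, and PowerShell launching the C# compiler) without knowing anything about the payload. The final link goes unreported only because `csc.exe` has no entry in this toy baseline.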

This is the operational output of Autonomous Security Intelligence (ASI), the intelligence fabric built into the Singularity Platform. ASI runs on-device at the edge as part of the core architecture. It is already running when the attack starts, killing the process before the threat can escalate.

Where customers had SentinelOne fully deployed with the right policies enabled, they were covered. Where they did not, they were exposed, and with average ransomware recovery costs exceeding $4M per incident, that exposure has a real price. If you are not certain your deployment matches the configuration that stopped these three attacks, that certainty is worth getting.

AI to Fight AI

This is the product reality behind the thesis SentinelOne brought to RSAC: AI to fight AI. A machine-speed adversary requires a machine-speed defense. That is an architectural requirement, not a positioning statement. ASI monitors behavioral patterns at the point of execution and kills the process when something deviates, at machine speed, without waiting for a human to write a query or approve a kill.

According to an IDC study, organizations using SentinelOne’s AI platform identify threats 63% faster and remediate 55% faster than legacy solutions, neutralizing 99% of threats without a single manual step. For organizations in regulated industries (healthcare, financial services, manufacturing, critical infrastructure), the stakes compound beyond breach cost. An exposure window that stays open through manual investigation is a potential regulatory notification event, an audit finding, and a conversation the CISO has with the board under circumstances no one wants. The difference between a stopped attack and an active breach is whether the architecture acts before the attacker establishes persistence. By the time a human analyst approves the kill, redundant persistence mechanisms may already be installed. The CPU-Z attack deployed three of them specifically because partial cleanup leaves the payload operational.

Human-driven workflows, manual validation, and legacy tooling cannot keep pace with that attack cadence. When defense relies on investigation before action, the advantage shifts to the adversary. The gap is in the architecture. You cannot tune your way out of it.

Conclusion | The Only Question That Matters

SentinelOne’s latest Annual Threat Report documented the pattern these three attacks confirm: Adversaries are “shifting left” by integrating malicious logic into the build process itself, compromising software before it reaches production. It is the current operating model of advanced threat actors, and it is accelerating.

Three attacks. Three detections. Three outcomes, all in a matter of weeks. The architecture that survived them is real-time, AI-native, and built into the edge.

The question every security leader should be able to answer: Could your current solution have stopped LiteLLM, Axios, and CPU-Z autonomously, on the day of each attack, with no prior knowledge of any payload?

If the answer depends on a signature update, a cloud verdict, a manual investigation step, or a policy that wasn’t enabled, that is your answer.

Read the full technical breakdown of each incident:

- How SentinelOne Stopped the LiteLLM Supply Chain Attack

- Securing the Supply Chain: The Axios Attack

- How SentinelOne Blocked the CPU-Z Watering Hole Attack

Third-Party Trademark Disclaimer:

All third-party product names, logos, and brands mentioned in this publication are the property of their respective owners and are for identification purposes only. Use of these names, logos, and brands does not imply affiliation, endorsement, sponsorship, or association with the third-party.