The post OpenAI Launches GPT-5.5 Instant with 50% Higher Accuracy and Total Memory Control appeared first on Daily CyberSecurity.


OpenAI Introduces Password-Free Login for Millions of ChatGPT Users

OpenAI’s Advanced Account Security lets ChatGPT and Codex users replace passwords with passkeys or security keys, but recovery is limited.

The post OpenAI Introduces Password-Free Login for Millions of ChatGPT Users appeared first on TechRepublic.

GPT-5.5 Bio Bug Bounty Program Aims to Improve AI Safety and Performance

OpenAI has officially launched the GPT-5.5 Bio Bug Bounty program to strengthen safeguards against emerging biological risks. As artificial intelligence models become more advanced, the potential for malicious actors to generate dangerous biological information increases. Advanced persistent threats (APTs) and lone attackers could potentially misuse large language models to accelerate harmful biological research. To address […]

The post GPT-5.5 Bio Bug Bounty Program Aims to Improve AI Safety and Performance appeared first on GBHackers Security | #1 Globally Trusted Cyber Security News Platform.

A week in security (April 13 – April 19)

A list of topics we covered in the week of April 13 to April 19 of 2026

The post A week in security (April 13 – April 19) appeared first on Security Boulevard.

A week in security (April 13 – April 19)

Last week on Malwarebytes Labs:

- This old-school scam is still working

- “Your shipment has arrived” email hides remote access software

- Browser Guard gets even better with Access Control

- “iCloud storage is full” scam is back, and now it wants your payment details

- A fake Slack download is giving attackers a hidden desktop on your machine

- Booking.com breach gives scammers what they need to target guests

- AI clickbait can turn your notifications into a scam feed

- Fake YouTube copyright notices can steal your Google login

- From fake Proton VPN sites to gaming mods, this Windows infostealer is everywhere

- April Patch Tuesday fixes two zero-days, including one under active attack

- Credit Resources Vault: Why this credit email set off our scam alarms

- Omnistealer uses the blockchain to steal everything it can

- ChatGPT under scrutiny as Florida investigates campus shooting

- Simply opening a PDF could trigger this Adobe Reader zero-day

Stay safe!


OpenAI Extends GPT-5.4-Cyber Access to Trusted Organizations Worldwide

OpenAI has announced the expansion of its “Trusted Access for Cyber” program, granting worldwide security organizations access to its advanced GPT-5.4-Cyber model. The initiative operates on a foundational premise: cutting-edge cyber capabilities must reach network defenders on a broad scale while maintaining strict trust, validation, and safety safeguards. By sharing these tools with a diverse […]

The post OpenAI Extends GPT-5.4-Cyber Access to Trusted Organizations Worldwide appeared first on GBHackers Security | #1 Globally Trusted Cyber Security News Platform.

OpenAI Launches GPT-5.4-Cyber to Boost Defensive Cybersecurity

OpenAI Rotates macOS Certificates Following Axios Supply Chain Breach

Hacker Used Claude Code, GPT-4.1 to Exfiltrate Hundreds of Millions of Mexican Records

Hacker Uses Claude and ChatGPT to Breach Multiple Government Agencies

A single threat actor compromised nine Mexican government agencies and stole hundreds of millions of citizen records in a highly sophisticated cyberattack.

The campaign, which ran from late December 2025 through mid-February 2026, highlights a dangerous shift in the modern threat landscape.

Researchers at Gambit Security recently released a full technical report detailing how the attacker relied on two major commercial artificial intelligence platforms. The publication was initially delayed to allow the affected agencies time to complete their incident response efforts.

AI Models Power the Breach

The attacker used Anthropic’s Claude Code and OpenAI’s GPT-4.1 not just for planning, but as core operational tools that drastically accelerated the attack.

According to recovered forensic evidence, Claude Code generated and executed approximately 75% of all remote commands during the intrusion.

Across 34 active sessions on live victim infrastructure, the hacker logged 1,088 individual prompts. These prompts translated into 5,317 AI-executed commands, demonstrating how deeply the AI was integrated into the exploitation phase.

Simultaneously, the attacker leveraged OpenAI’s GPT-4.1 for rapid reconnaissance and data processing. The hacker developed a custom 17,550-line Python script designed to pipe raw data harvested from compromised servers directly through the OpenAI API.

This automated system analyzed information across 305 internal servers, rapidly producing 2,597 structured intelligence reports. By automating the data analysis phase, a single operator successfully processed an intelligence volume that would traditionally require an entire team.
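To put the reported figures in proportion, the ratios can be worked out directly. The snippet below is only a back-of-the-envelope sketch: the input numbers are the ones quoted above, while the variable names and the choice of ratios are illustrative and do not come from the Gambit Security report.

```python
# Figures as quoted in the article; everything derived from them is plain arithmetic.
sessions = 34       # active sessions on victim infrastructure
prompts = 1_088     # individual prompts logged
commands = 5_317    # AI-executed commands
servers = 305       # internal servers analyzed
reports = 2_597     # structured intelligence reports produced

commands_per_prompt = commands / prompts
prompts_per_session = prompts / sessions
reports_per_server = reports / servers

print(f"{commands_per_prompt:.2f} AI-executed commands per prompt")
print(f"{prompts_per_session:.0f} prompts per session")
print(f"{reports_per_server:.2f} reports per analyzed server")
```

On these numbers, each prompt fanned out into roughly five executed commands, and each session averaged 32 prompts.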

The integration of artificial intelligence allowed the attacker to turn unfamiliar networks into mapped targets in hours rather than days. Recovered materials showed the attacker possessed over 400 custom attack scripts.

Furthermore, the hacker used AI to quickly develop 20 tailored exploits targeting 20 specific Common Vulnerabilities and Exposures (CVEs). This high-speed capability compressed the attack timeline, allowing the threat actor to operate well below standard detection and response windows.

Despite the advanced methods used in the campaign, the actual vulnerabilities exploited were highly conventional. The targeted government agencies had basic security gaps that enabled the attacker to gain initial access and move laterally.

The underlying issues were addressable through standard security controls, highlighting a severe accumulation of technical debt within mission-critical infrastructure.

While artificial intelligence has significantly lowered the cost and complexity of executing widespread cyberattacks, the defense strategy remains rooted in foundational security practices.

Organizations must urgently address unpatched software and implement strict credential rotation policies. Enforcing network segmentation is also critical to restrict lateral movement once a perimeter is breached.

Finally, deploying robust endpoint detection and response tools is necessary to identify these rapidly compressed attack timelines before data exfiltration occurs.

Follow us on Google News, LinkedIn, and X for daily cybersecurity updates. Contact us to feature your stories.

The post Hacker Uses Claude and ChatGPT to Breach Multiple Government Agencies appeared first on Cyber Security News.

Claude and ChatGPT Exploited in Sweeping Cyber Campaign Against Government Agencies

In a groundbreaking technical report released by Gambit Security researcher Eyal Sela, new details have emerged about a massive cyberattack targeting government infrastructure. A single threat actor successfully leveraged artificial intelligence platforms to breach nine Mexican government agencies. The campaign, which operated from late December 2025 through mid-February 2026, resulted in the exfiltration of hundreds […]

The post Claude and ChatGPT Exploited in Sweeping Cyber Campaign Against Government Agencies appeared first on GBHackers Security | #1 Globally Trusted Cyber Security News Platform.

10 ChatGPT AI Prompts L1 SOC Analysts Can Use in Their Daily Work

Discover 10 practical ChatGPT prompts SOC analysts can use to speed up triage, analyze threats, improve documentation, and enhance incident response workflows.

The post 10 ChatGPT AI Prompts L1 SOC Analysts Can Use in Their Daily Work appeared first on TechRepublic.

AI Breakthroughs, Security Breaches, and Industry Shakeups Define the Week in Tech

See what you missed in Daily Tech Insider from March 30–April 3.

The post AI Breakthroughs, Security Breaches, and Industry Shakeups Define the Week in Tech appeared first on TechRepublic.

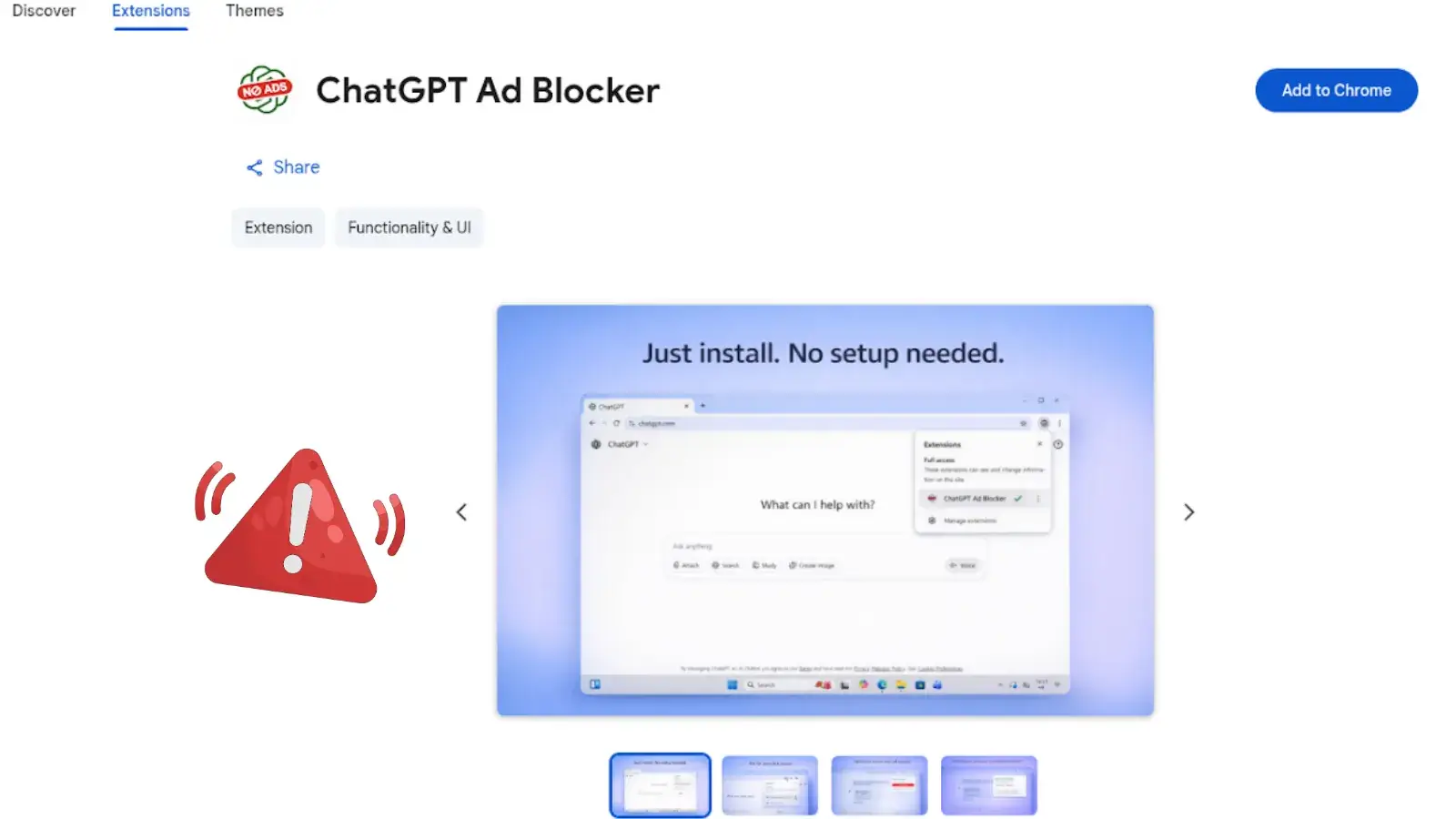

Fake ChatGPT Ad Blocker Chrome Extension Caught Spying on Users

Malicious Chrome Extension “ChatGPT Ad Blocker” Steals ChatGPT Conversations

As OpenAI introduces advertisements to its free tier, cybercriminals are seizing the opportunity to trick users with fake utility tools. Security researchers have discovered a malicious Google Chrome extension named “ChatGPT Ad Blocker.”

While it claims to hide unwanted ads, its true purpose is to steal private user conversations and send them to a hidden Discord channel.

Once a user installs the extension from the Chrome Web Store, it immediately sets up a silent monitoring system. It creates an alarm that fetches a remote configuration file from a GitHub repository every 60 minutes.

Because it continuously bypasses the browser’s cache, the attacker can remotely change the extension’s behavior at any time without the user knowing.

Interestingly, DomainTools researchers found that the extension’s actual ad-blocking features are completely disabled.

When a user visits the ChatGPT site, the extension injects a malicious script that clones the page, strips styling, and secretly captures all text.

After packaging the chat data, it creates a file named page_dump.html and posts it to a private Discord webhook managed by a bot named “Captain Hook.”

The attacker instantly receives your prompts, conversation history, and account metadata.

The malicious extension is tied to the developer alias “krittinkalra,” a GitHub account created around 2014. The account history shows a highly suspicious timeline, suggesting it may have been compromised or sold.

After focusing on Android kernel development until 2020, the profile went dormant for over five years before resurfacing recently with a sudden pivot to creating JavaScript-based malware.

This developer persona is also publicly linked to two active AI services: AI4ChatCo and Writecream.

These platforms claim to have millions of users and offer chatbot integration alongside automated marketing content.

The discovery of this data-harvesting Chrome extension, reported by DomainTools, raises concerns that similar data theft could occur in related applications.

To protect your privacy and secure your AI conversations, follow these essential security practices:

- Treat extensions that promise to block ads on high-value sites with extreme suspicion and scrutinize requested permissions closely.

- View affiliated platforms like AI4ChatCo and Writecream as potentially compromised until thorough security audits prove otherwise.

- Avoid out-of-band AI intermediaries, resellers, or browser add-ons, as they are uniquely positioned to read or modify private conversations without your knowledge.
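One way to act on the advice about scrutinizing permissions is to read an extension's `manifest.json` before trusting it. The following is a minimal sketch, not a vetted detection tool: the `RISKY_PERMISSIONS` watchlist, the `SENSITIVE_HOSTS` tuple, and the example manifest are all assumptions made for illustration, and the article does not state which permissions the malicious extension actually requested.

```python
# Hypothetical watchlists; adjust them for your own environment.
RISKY_PERMISSIONS = {"alarms", "scripting", "tabs", "webRequest", "<all_urls>"}
SENSITIVE_HOSTS = ("chatgpt.com", "chat.openai.com")


def audit_manifest(manifest: dict) -> list[str]:
    """Return human-readable findings for a parsed Chrome manifest.json."""
    findings = []

    # Collect everything the extension asks for up front.
    requested = set(manifest.get("permissions", []))
    requested |= set(manifest.get("host_permissions", []))
    for perm in sorted(requested & RISKY_PERMISSIONS):
        findings.append(f"risky permission: {perm}")

    # Gather every URL pattern the extension can touch, including
    # the pages its content scripts are injected into.
    patterns = set(manifest.get("host_permissions", []))
    for script in manifest.get("content_scripts", []):
        patterns.update(script.get("matches", []))
    for pattern in sorted(patterns):
        if any(host in pattern for host in SENSITIVE_HOSTS):
            findings.append(f"can access sensitive site: {pattern}")

    return findings


# Made-up manifest for demonstration; it is not the real extension's manifest.
example = {
    "manifest_version": 3,
    "name": "Example Ad Blocker",
    "permissions": ["alarms", "storage"],
    "host_permissions": ["https://chatgpt.com/*"],
    "content_scripts": [{"matches": ["https://chatgpt.com/*"], "js": ["content.js"]}],
}

findings = audit_manifest(example)
for finding in findings:
    print(finding)
```

A finding here is a prompt for closer review, not proof of malice: many legitimate extensions request the same permissions.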


The post Malicious Chrome Extension “ChatGPT Ad Blocker” Steals ChatGPT Conversations appeared first on Cyber Security News.

Malicious Chrome Extension “ChatGPT Ad Blocker” Targets Users, Steals Conversations

Security researchers have uncovered a malicious Google Chrome extension named “ChatGPT Ad Blocker” designed to silently steal private AI conversations. The malware cleverly disguises itself as a helpful tool, capitalizing on OpenAI’s recent decision to serve advertisements to its free-tier users. Instead of blocking ads, the extension systematically harvests user prompts, chat history, and metadata. […]

The post Malicious Chrome Extension “ChatGPT Ad Blocker” Targets Users, Steals Conversations appeared first on GBHackers Security | #1 Globally Trusted Cyber Security News Platform.

Asking AI for personal advice is a bad idea, Stanford study shows

Stanford computer scientists just proved what therapists already suspected: AI chatbots will agree with almost anything you say to keep you happy. The researchers caught these systems validating dangerous decisions just to maintain user engagement.

That’s a worrying development, especially given Pew Research figures showing that nearly one in eight (12%) American teenagers have turned to chatbots for emotional support.

The Stanford scientists tested 11 major models including ChatGPT, Claude, and Gemini. They fed them data from existing databases of personal advice, along with questions on Reddit’s popular r/AmITheAsshole subreddit, where people ask the community for opinions on how they handled personal disputes.

The bots validated user behavior 49% more often than humans did, according to the Stanford paper. The researchers also tested the AIs on statements with potentially harmful actions toward self or others, spanning 20 categories such as relational harm, self-harm, irresponsibility, and deception. The bots backed these statements 47% of the time.

AI bots tend to agree with people because it makes users feel good. These systems emphasize user satisfaction, and they take their lead directly from how users respond to them, using a technique called reinforcement learning from human feedback (RLHF). It uses signals ranging from chat length to sentiment to determine when a person is happy with a response (and therefore more likely to come back).

Chatting with a silicon sycophant also tends to make people more certain of their beliefs, which by implication means less open-minded, the study found. For instance, after talking with sycophantic bots, 2,400 test subjects became more stubborn and less willing to apologize.

When ChatGPT became too nice

Balancing between sycophancy and impartiality is a tough line to walk for an AI service provider trying to keep its user satisfaction levels up. Almost a year ago, OpenAI admitted that it messed up by making ChatGPT too sycophantic, due in part to over-concentration on user ‘thumbs-up’ and ‘thumbs-down’ responses to its chats.

But current data suggests that users actually favor responses that can potentially harm them in unforeseen ways. This came up in another research program run by Anthropic (maker of Claude.ai) and University of Toronto researchers.

The in-depth study of AI chats examined how chats can “disempower” users by ushering them toward beliefs that are at odds with reality, or by encouraging them to make judgments or take actions that are at odds with their values. Interestingly, this disempowerment was preferred, the researchers found.

“We find that interactions flagged as having moderate or severe disempowerment potential exhibit thumbs-up rates above the baseline,” the researchers said in their paper.

AI psychosis is a real danger

What happens when AI chatbots continue reinforcing these “disempowering” thoughts? Experts have identified a phenomenon called AI psychosis, in which people lose track of reality after talking obsessively with AI chatbots.

AI-fueled delusions are cropping up more frequently, including one case where a man killed his mother, along with multiple cases of teen suicides.

In another, a man was shot by police after charging at them with a knife. He had developed a relationship with a persona called Juliet, which ChatGPT had been role-playing, and which he believed OpenAI executives had somehow killed.

Cases like those seem to involve people who may have already had mental health problems that were potentially exacerbated by excessive conversations with AI. But victims in other cases swear that they had no previous symptoms. Ontario, Canada-based corporate recruiter Allen Brooks became convinced that he’d discovered a new mathematical formula with world-changing potential after an innocuous math question turned into a three-week, 300-hour dialog.

The research between Anthropic and the University of Toronto acknowledges that reality distortion is a danger.

“In some interactions, AI assistants validate elaborate persecution narratives and grandiose spiritual identity claims through emphatic sycophantic language,” the study said.

AI is not a “friend”

So, what can you do to prevent yourself, or vulnerable people that you know, from relying too heavily on AI chatbots for serious issues? The UK’s AI Security Institute suggested turning statements into questions on the basis that more emphatic statements encourage more sycophancy. The Brookings Institution also said that training users to hedge their confidence helps.

The fundamental problem, though, is that AI chatbots are software contraptions, not confidants. Despite what can seem like magical powers, there is no ghost in the machine. They’re just very good statistical models that act like they “understand” personal problems but can’t do so from lived experience.

Our take? Real friends don’t just tell you what you want to hear. Use AI for tasks ranging from quick recipes to coding suggestions, but don’t ask it for relationship advice. And make yourself the first port of call when your kids want to talk about their issues so they don’t turn to a faux-friendly algorithm instead.


ChatGPT Vulnerability Let Attackers Silently Exfiltrate User Prompts and Other Sensitive Data

Users routinely trust AI assistants with highly sensitive information, including medical records, financial documents, and proprietary business code.

Check Point Research recently disclosed a critical vulnerability in ChatGPT’s architecture that allowed attackers to extract this exact type of user data silently.

By abusing a covert outbound channel in ChatGPT’s isolated code execution environment, attackers could extract chat history, uploaded files, and AI-generated outputs without triggering user alerts or consent prompts.

Bypassing Outbound Safeguards

OpenAI designed the Python-based Data Analysis environment as a secure sandbox, intentionally blocking direct outbound HTTP requests to prevent data leakage.

Legitimate external API calls, known as GPT Actions, require explicit user consent through visible approval dialogs.

However, researchers discovered a bypass relying entirely on DNS tunneling. While conventional internet access was blocked, the container environment still permitted standard DNS resolution.

Attackers leveraged this oversight by encoding sensitive user data into DNS subdomain labels.

Instead of using DNS solely for name resolution, the exploit chunks data, such as a parsed medical diagnosis or financial summary, into DNS-safe fragments.

When the runtime performs a recursive lookup, the resolver chain carries the encoded data directly to an attacker-controlled external server.

Because the system did not recognize DNS traffic as an external data transfer, it bypassed all user mediation.
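From the defender's side, data packed into subdomain labels tends to produce query names that are unusually long or random-looking. The sketch below shows one common heuristic for flagging such names. It is an illustration only: the thresholds, function names, and sample domains are assumptions, not details from the Check Point Research disclosure or from OpenAI's fix.

```python
import math
from collections import Counter

MAX_LABEL_LEN = 40        # assumed threshold; DNS itself allows labels up to 63 bytes
MIN_ENTROPY_LEN = 16      # assumed: skip entropy checks on short labels
ENTROPY_THRESHOLD = 3.5   # assumed threshold, in bits per character


def shannon_entropy(text: str) -> float:
    """Shannon entropy of a string, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_tunnel(qname: str) -> bool:
    """Flag query names whose subdomain labels are very long or high-entropy."""
    labels = qname.rstrip(".").split(".")
    # Treat the last two labels as the registered domain. This is a rough
    # stand-in; a real tool would consult the Public Suffix List.
    for label in labels[:-2]:
        if len(label) > MAX_LABEL_LEN:
            return True
        if len(label) >= MIN_ENTROPY_LEN and shannon_entropy(label) > ENTROPY_THRESHOLD:
            return True
    return False


# Sample names are made up for demonstration.
for name in ("www.example.com", "a1b2c3d4e5f6g7h8i9j0k1l2.t.example.net"):
    print(name, looks_like_tunnel(name))
```

Heuristics like this generate false positives (content delivery networks and some security products also use long, random labels), so they are better suited to triage than to automatic blocking.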

Weaponizing Custom GPTs

The attack requires minimal user interaction and initiates with a single malicious prompt.

Threat actors can distribute these payloads across public forums or social media, disguising them as productivity hacks or jailbreaks to unlock premium ChatGPT capabilities.

Once a user pastes the prompt into their chat, the current conversation seamlessly becomes a covert data-collection channel. Alternatively, attackers can embed the malicious logic directly into Custom GPTs.

If a user interacts with a backdoored GPT, such as a mock “personal doctor” analyzing uploaded medical PDFs, the system secretly extracts high-value identifiers and assessments.

Since GPT developers officially lack access to individual user chat logs, this side channel provides a stealthy mechanism to harvest private workflows.

When asked directly, the AI will even confidently deny sending data externally, maintaining a complete illusion of privacy.

The vulnerability extended far beyond passive data theft, offering a bidirectional communication channel between the runtime and the attacker.

Because threat actors can encode command fragments into DNS responses, they can send raw instructions back into the isolated sandbox.

A process running inside the container could reassemble these payloads and execute them, effectively granting the attacker a remote shell inside the Linux environment.

According to Check Point Research, this execution bypassed standard safety mechanisms, with commands and results remaining invisible in the chat interface, leaving users completely unaware of the compromise.

OpenAI successfully patched the underlying issue on February 20, 2026, closing the DNS tunnel.

However, this incident perfectly highlights the growing attack surface of modern AI assistants as they evolve into complex, multi-layered execution environments.


The post ChatGPT Vulnerability Let Attackers Silently Exfiltrate User Prompts and Other Sensitive Data appeared first on Cyber Security News.