Human communication is multimodal. We receive information in many different ways, allowing our brains to see the world from various angles and turn these different “modes” of information into a consolidated picture of reality.

We’ve now reached the point where artificial intelligence (AI) can do the same, at least to a degree. Much like our brains, multimodal AI applications process different types, or modalities, of data. For example, OpenAI’s GPT-4o can reason across text, vision and audio, granting it greater contextual awareness and more humanlike interaction.

However, while these applications are clearly valuable in a business environment that’s laser-focused on efficiency and adaptability, their inherent complexity also introduces some unique risks.

According to Ruben Boonen, CNE Capability Development Lead at IBM: “Attacks against multimodal AI systems are mostly about getting them to create malicious outcomes in end-user applications or bypass content moderation systems. Now imagine these systems in a high-risk environment, such as a computer vision model in a self-driving car. If you could fool a car into thinking it shouldn’t stop even though it should, that could be catastrophic.”

Multimodal AI risks: An example in finance

Here’s another possible real-world scenario:

An investment banking firm uses a multimodal AI application to inform its trading decisions, processing both textual and visual data. The system uses a sentiment analysis tool to analyze text data, such as earnings reports, analyst insights and news feeds, to determine how market participants feel about specific financial assets. Then, it conducts a technical analysis of visual data, such as stock charts and trend analysis graphs, to offer insights into stock performance.

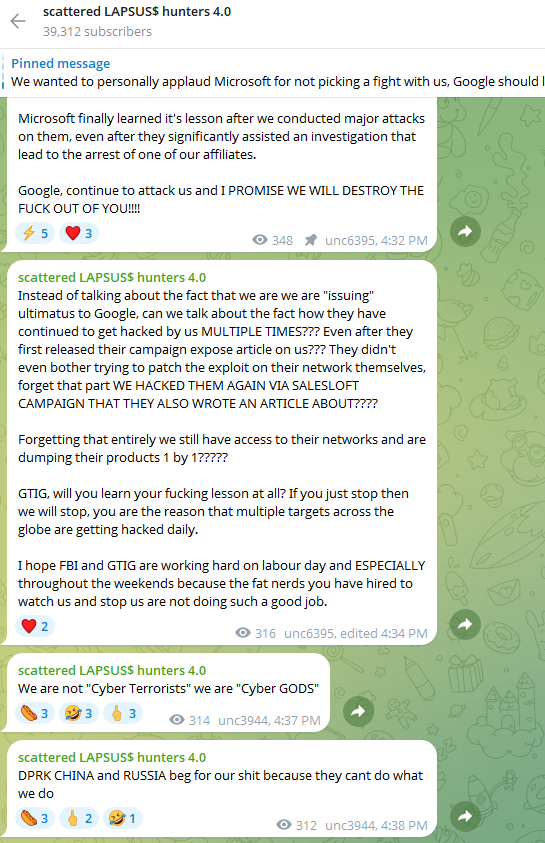

An adversary, a fraudulent hedge fund manager, then targets vulnerabilities in the system to manipulate trading decisions. In this case, the attacker launches a data poisoning attack by flooding online news sources with fabricated stories about specific markets and financial assets. Next, they launch an adversarial attack by making pixel-level manipulations — known as perturbations — to stock performance charts that are imperceptible to the human eye but enough to exploit the AI’s visual analysis abilities.
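To make the idea of a perturbation concrete, here is a minimal sketch. It assumes a toy linear scorer over pixel intensities, not any real trading model; the weights, pixel values, bias and `epsilon` are all invented for illustration. Each pixel moves by at most `epsilon` in the direction that raises the score, which is the idea behind sign-gradient attacks such as the fast gradient sign method.

```python
# Toy illustration only: a linear scorer over pixel intensities in [0, 1].
# Weights, pixels, bias, and epsilon are hypothetical values for this sketch.

def score(weights, pixels, bias):
    """Linear 'bullish' score: positive means the toy model says buy."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias

def sign(x):
    """Return -1, 0, or 1 depending on the sign of x."""
    return (x > 0) - (x < 0)

def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon toward a higher score.

    For a linear model, the gradient of the score with respect to each
    pixel is its weight, so the step is epsilon * sign(weight).
    Results are clipped so they remain valid intensities in [0, 1].
    """
    return [min(1.0, max(0.0, p + epsilon * sign(w)))
            for w, p in zip(weights, pixels)]

weights = [0.5, -0.4, 0.3, -0.6, 0.2, -0.1, 0.4, -0.3]
pixels = [0.4, 0.5, 0.5, 0.4, 0.5, 0.5, 0.45, 0.5]
bias = -0.03

clean_score = score(weights, pixels, bias)            # negative: no buy signal
adv_pixels = perturb(weights, pixels, epsilon=0.02)
adv_score = score(weights, adv_pixels, bias)          # positive: buy signal
max_change = max(abs(a - p) for a, p in zip(adv_pixels, pixels))
```

In this toy case no pixel changes by more than 0.02, yet the decision flips. Real image models are nonlinear, so an attacker would need gradient access or repeated queries, but the principle of many tiny, coordinated changes is the same.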

The result? Due to the manipulated input data and false signals, the system recommends buy orders at artificially inflated stock prices. Unaware of the exploit, the company follows the AI’s recommendations, while the attacker, holding shares in the target assets, sells them for an ill-gotten profit.

Getting there before adversaries

Now, let’s imagine that the attack wasn’t really carried out by a fraudulent hedge fund manager but was instead a simulated attack by a red team specialist with the goal of discovering the vulnerability before a real-world adversary could.

By simulating these complex, multifaceted attacks in safe, sandboxed environments, red teams can reveal potential vulnerabilities that traditional security systems are almost certain to miss. This proactive approach is essential for fortifying multimodal AI applications before they end up in a production environment.

According to the IBM Institute for Business Value, 96% of executives agree that the adoption of generative AI will increase the chances of a security breach in their organizations within the next three years. The rapid proliferation of multimodal AI models will only multiply that problem, hence the growing importance of AI-specialized red teaming. These specialists can proactively address the unique risk that comes with multimodal AI: cross-modal attacks.

Cross-modal attacks: Manipulating inputs to generate malicious outputs

A cross-modal attack involves inputting malicious data in one modality to produce malicious output in another. These can take the form of data poisoning attacks during the model training and development phase or adversarial attacks, which occur during the inference phase once the model has already been deployed.

“When you have multimodal systems, they’re obviously taking input, and there’s going to be some kind of parser that reads that input. For example, if you upload a PDF file or an image, there’s an image-parsing or OCR library that extracts data from it. However, those types of libraries have had issues,” says Boonen.

Cross-modal data poisoning attacks are arguably the most severe since a major vulnerability could necessitate the entire model being retrained on an updated data set. Generative AI uses encoders to transform input data into embeddings — numerical representations of the data that encode relationships and meanings. Multimodal systems use different encoders for each type of data, such as text, image, audio and video. On top of that, they use multimodal encoders to integrate and align data of different types.

In a cross-modal data poisoning attack, an adversary with access to training data and systems could manipulate input data to make encoders generate malicious embeddings. For example, they might deliberately add incorrect or misleading text captions to images so that the encoder misclassifies them, resulting in an undesirable output. In cases where the correct classification of data is crucial, as it is in AI systems used for medical diagnoses or autonomous vehicles, this can have dire consequences.
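One way a red team or data owner might screen a training set for mislabeled captions is to compare each image embedding with its caption embedding and flag pairs that disagree. The sketch below assumes both embeddings already live in a shared space and uses hand-written three-dimensional vectors; the pair IDs, vectors and the 0.5 threshold are hypothetical, and a real pipeline would take embeddings from its own encoders.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def flag_suspect_pairs(pairs, threshold=0.5):
    """Return IDs of image/caption pairs whose embeddings disagree."""
    return [pair_id for pair_id, image_vec, caption_vec in pairs
            if cosine(image_vec, caption_vec) < threshold]

# (pair ID, image embedding, caption embedding), all invented for this sketch.
pairs = [
    ("stop_sign_01", [0.9, 0.1, 0.0], [0.8, 0.2, 0.1]),
    ("stop_sign_02", [0.9, 0.1, 0.0], [0.1, 0.1, 0.9]),  # misleading caption
    ("pedestrian_01", [0.1, 0.9, 0.1], [0.2, 0.8, 0.0]),
]

suspects = flag_suspect_pairs(pairs)
```

Here only `stop_sign_02` is flagged, because its caption embedding points in a very different direction from its image embedding. A check like this only helps if the encoders used for screening were not themselves trained on the poisoned data.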

Red teaming is essential for simulating such scenarios before they can have real-world impact. “Let’s say you have an image classifier in a multimodal AI application,” says Boonen. “There are tools that you can use to generate images and have the classifier give you a score. Now, let’s imagine that a red team targets the scoring mechanism to gradually get it to classify an image incorrectly. For images, we don’t necessarily know how the classifier determines what each element of the image is, so you keep modifying it, such as by adding noise. Eventually, the classifier stops producing accurate results.”
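The process Boonen describes can be sketched as a simple random search that sees only the classifier's score. This is a minimal illustration under stated assumptions: `classifier_score` is a made-up stand-in for a black-box model, and the step size, threshold and query budget are arbitrary choices, not values from any real tool.

```python
import random

def classifier_score(pixels):
    """Stand-in for a black-box model: confidence in the true label.

    The attacker only ever sees this number, never the weights.
    The weights are invented for this sketch.
    """
    weights = [0.9, 0.1, 0.8, 0.2, 0.7, 0.3]
    return sum(w * p for w, p in zip(weights, pixels)) / sum(weights)

def noise_search(pixels, score_fn, step=0.05, threshold=0.5,
                 max_queries=500, seed=0):
    """Add small random noise, keeping a candidate only if the score drops.

    Stops when the score falls below the threshold (the label flips)
    or the query budget is exhausted.
    """
    rng = random.Random(seed)
    best = list(pixels)
    best_score = score_fn(best)
    queries = 1
    while best_score >= threshold and queries < max_queries:
        candidate = [min(1.0, max(0.0, p + rng.uniform(-step, step)))
                     for p in best]
        candidate_score = score_fn(candidate)
        queries += 1
        if candidate_score < best_score:
            best, best_score = candidate, candidate_score
    return best, best_score, queries

image = [0.8, 0.5, 0.7, 0.5, 0.9, 0.5]
start_score = classifier_score(image)
adv_image, final_score, queries_used = noise_search(image, classifier_score)
```

Because the search needs nothing but repeated score queries, rate limiting and monitoring for bursts of near-identical inputs are among the defenses a red team would want to test.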

Vulnerabilities in real-time machine learning models

Many multimodal models learn continuously from new data in real time, as in the trading scenario we explored earlier. That scenario also illustrates a cross-modal adversarial attack: an adversary bombards an AI application that’s already in production with manipulated data to trick the system into misclassifying inputs. Degradation can, of course, happen unintentionally, too, which is why it’s sometimes said that generative AI is getting “dumber.”

In any case, models that are trained or retrained on bad data end up degrading over time, a phenomenon known as AI model drift. Multimodal AI systems exacerbate this problem because of the added risk of inconsistencies between different data types. That’s why red teaming is essential for detecting vulnerabilities in the way different modalities interact with one another, during both the training and inference phases.
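A simple way to watch for this kind of degradation is to re-score the model on a fixed, trusted set of labeled examples after every retraining cycle and raise a flag when accuracy falls too far below the baseline. The sketch below assumes such a trusted set exists; the labels, the prediction snapshots and the 0.15 tolerance are hypothetical.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the trusted labels."""
    correct = sum(1 for p, y in zip(predictions, labels) if p == y)
    return correct / len(labels)

def detect_drift(baseline_accuracy, snapshots, labels, tolerance=0.15):
    """Return the index of the first snapshot whose accuracy falls more
    than `tolerance` below the baseline, or None if none does."""
    for index, predictions in enumerate(snapshots):
        if baseline_accuracy - accuracy(predictions, labels) > tolerance:
            return index
    return None

# Trusted labels and model predictions after four retraining cycles.
labels = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
snapshots = [
    [1, 0, 1, 1, 0, 1, 0, 0, 1, 1],  # 10 of 10 correct
    [1, 0, 1, 1, 0, 1, 0, 0, 1, 0],  # 9 of 10 correct
    [1, 0, 0, 1, 0, 1, 1, 0, 1, 0],  # 7 of 10 correct
    [0, 0, 0, 1, 1, 1, 1, 0, 1, 0],  # 5 of 10 correct
]

first_drift = detect_drift(accuracy(snapshots[0], labels), snapshots, labels)
```

In this example the third snapshot (index 2) is the first to breach the tolerance. For a multimodal system, it may be worth keeping a separate trusted set per modality so that a drop can be traced to the data type that caused it.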

Red teams can also detect vulnerabilities in security protocols and how they’re applied across modalities. Different types of data require different security protocols, but they must be aligned to prevent gaps from forming. Consider, for example, an authentication system that lets users verify themselves with either voice or facial recognition. Let’s imagine that the voice verification element lacks sufficient anti-spoofing measures. Chances are, an attacker will target the less secure modality.
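The arithmetic behind that intuition can be sketched in a few lines. The spoof rates below are invented, and the calculation assumes attempts against each modality succeed independently, which may not hold in practice.

```python
def weakest_modality(spoof_rates):
    """Name of the modality an attacker is most likely to spoof."""
    return max(spoof_rates, key=spoof_rates.get)

def any_of_spoof_rate(spoof_rates):
    """Chance of a break-in when passing ANY one modality is enough
    and the attacker tries each modality once (independence assumed)."""
    blocked = 1.0
    for rate in spoof_rates.values():
        blocked *= 1.0 - rate
    return 1.0 - blocked

def all_of_spoof_rate(spoof_rates):
    """Chance of a break-in when EVERY modality must be passed."""
    passed = 1.0
    for rate in spoof_rates.values():
        passed *= rate
    return passed

# Hypothetical per-attempt spoof success rates.
spoof_rates = {"face": 0.01, "voice": 0.20}

target = weakest_modality(spoof_rates)          # "voice"
either_rate = any_of_spoof_rate(spoof_rates)    # about 0.208
both_rate = all_of_spoof_rate(spoof_rates)      # about 0.002
```

With an either-or policy, the overall exposure is slightly worse than the weakest modality alone, no matter how strong the other one is. Requiring both modalities reverses that, at the cost of more friction for legitimate users.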

Multimodal AI systems used for surveillance and access control are also subject to data synchronization risks. Such a system might use video and audio data to detect suspicious activity in real time by matching lip movements captured on video to a spoken passphrase or name. If an attacker were to tamper with the feeds, resulting in a slight delay between the two, they could mislead the system using pre-recorded video or audio to gain unauthorized access.
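A basic safeguard that a red team could probe is a skew check on paired audio and video events. The sketch below assumes the system can already extract matching event timestamps from both feeds (for example, the onset of each spoken syllable and the corresponding lip movement); the timestamps and the 80 ms tolerance are hypothetical.

```python
def max_skew_ms(video_ts, audio_ts):
    """Largest absolute gap, in milliseconds, between paired events."""
    if not video_ts or len(video_ts) != len(audio_ts):
        raise ValueError("feeds must contain the same non-zero number of events")
    return max(abs(v - a) for v, a in zip(video_ts, audio_ts))

def feeds_in_sync(video_ts, audio_ts, tolerance_ms=80):
    """True when every paired event falls within the allowed skew."""
    return max_skew_ms(video_ts, audio_ts) <= tolerance_ms

# Event timestamps in milliseconds, invented for this sketch.
video_events = [1000, 1450, 1980]
live_audio = [1010, 1440, 2000]       # small natural jitter
delayed_audio = [1300, 1750, 2290]    # replayed or tampered feed

live_ok = feeds_in_sync(video_events, live_audio)         # True
replay_ok = feeds_in_sync(video_events, delayed_audio)    # False
```

A red team exercise would test how much delay the tolerance actually permits and whether an attacker who controls both feeds can shift them together so the skew check never fires.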

Getting started with multimodal AI red teaming

While it’s admittedly still early days for attacks targeting multimodal AI applications, it always pays to take a proactive stance.

As next-generation AI applications become deeply ingrained in routine business workflows and even security systems themselves, red teaming doesn’t just bring peace of mind — it can uncover vulnerabilities that will almost certainly go unnoticed by conventional, reactive security systems.

Multimodal AI applications present a new frontier for red teaming, and organizations need red teams’ expertise to ensure they learn about vulnerabilities before their adversaries do.

The post Stress-testing multimodal AI applications is a new frontier for red teams appeared first on Security Intelligence.