The DSPM promise vs the enterprise reality

The data sprawl problem is worse than anyone admits

Before a DSPM tool can protect data, it must find it. That sounds straightforward. In practice, it is the first place most programs quietly begin to unravel.

Enterprises have been operating in hybrid and multi-cloud environments for a long time. Data has followed every workflow: into Salesforce, into SharePoint, into dozens of S3 buckets created by developers who have since moved on, and into collaboration tools adopted during the pandemic without any formal data classification policy attached. Nobody tracked it systematically. Research from Cyera’s 2024 DSPM Adoption Report found that 90% of the world’s data was created in just the last two years, and that total data volume reached 181 zettabytes by 2025. Security teams are being asked to govern a landscape that is growing faster than any tool or team was designed to handle.

When DSPM scanners go to work on a large enterprise environment, the volume of findings almost always exceeds initial expectations — sometimes by an order of magnitude. One organization I worked with discovered sensitive customer PII in seventeen cloud storage locations that they had no formal record of. Another found regulated financial data sitting in a collaboration workspace that had been shared with an external contractor two years prior and never revoked.

The visibility is genuinely valuable. But, as Wiz notes in their DSPM framework, visibility without remediation capacity is just a longer list of things that can go wrong. And that is exactly where the first real friction begins.

Ownership is a political problem, not a technical one

DSPM tools are exceptionally good at identifying data risk. They are not designed to resolve the organizational question of who is responsible for fixing it. That question, in most enterprises, does not have a clean answer.

Security teams surface the finding. The data sits in a business unit’s environment. The IT team may own the cloud account, but the data owner is in Finance, HR, or a product team operating on a separate roadmap and budget cycle. When the DSPM platform generates a remediation ticket, the question of who closes it — and who gets measured on closing it — is rarely answered in advance.

This creates what I call the remediation gap. Findings accumulate. Risk scores rise. But nothing gets fixed, because no single team has both the authority and the incentive to fix it. Security points at the business. The business points at IT. IT points at the data owner. The data owner has a product launch in six weeks and no security budget. Forcepoint’s DSPM implementation research confirms this pattern: Even capable platforms underdeliver when rollout turns into a scanning project with unclear ownership and remediation that lives in a permanently deferred backlog.

I have watched this dynamic play out in organizations across industries. It is not a technology failure. It is a governance failure, and no DSPM platform on the market today ships with a solution to it. That solution must be built by leadership, before deployment, with teeth. That means defined data ownership models, escalation paths and accountability metrics that connect to performance conversations, not just security dashboards.
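One way to make an ownership model concrete before deployment is to encode it as data that the ticketing workflow can consult. The sketch below is a hypothetical illustration, not a feature of any DSPM product: the `OWNERS` registry, the `Finding` fields, the store names and the 14-day escalation threshold are all assumed for the example.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical ownership registry: data store -> (accountable owner, escalation contact).
OWNERS = {
    "s3://finance-exports": ("finance-data-owner", "cfo-office"),
    "sharepoint://hr-records": ("hr-data-owner", "chro-office"),
}

ESCALATE_AFTER_DAYS = 14  # assumed threshold; in practice set by governance policy


@dataclass
class Finding:
    store: str    # where the sensitive data was found
    opened: date  # when the finding was raised


def assignee(finding: Finding, today: date) -> str:
    """Return who is accountable for a finding on a given day."""
    entry = OWNERS.get(finding.store)
    if entry is None:
        # No registered owner: the remediation gap made visible.
        return "UNOWNED"
    owner, escalation = entry
    age_days = (today - finding.opened).days
    # Stale findings move up the escalation path instead of sitting in a backlog.
    return escalation if age_days > ESCALATE_AFTER_DAYS else owner
```

The useful output here is arguably the `UNOWNED` case: counting those before go-live shows how much of the environment has no one accountable for it.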

Classification debt is real, and it compounds

Every DSPM implementation depends on one foundational input: A coherent data classification framework. Most enterprises do not have one that is current, enforced, or agreed upon across business units.

Organizations have policy documents written five years ago that nobody applies consistently. On top of that sits a growing volume of unstructured content that was never classified at all. According to a 2024 industry survey cited by Securiti, 83% of IT and cybersecurity leaders assert that lack of visibility into data contributes significantly to their weak security posture, a figure that points directly at the classification gap sitting underneath most programs.

DSPM tools apply machine learning to infer sensitivity from data patterns — and they are increasingly good at it. But inference is not a substitute for intentional classification. False positives create noise. False negatives create blind spots. Both erode trust in the platform over time. And once analysts stop trusting the findings, the program stalls regardless of how sophisticated the tooling is.

The harder truth is that many organizations use the DSPM project as a forcing function to finally build the classification framework they should have built years ago. That is not inherently wrong. But it dramatically expands the scope and timeline, and it requires business stakeholder engagement that security teams are rarely resourced to drive on their own. Executives who budget for a DSPM tool without budgeting for the classification work alongside it are setting their programs up for a slow, expensive drift toward shelfware.

Integration complexity is systematically underestimated

DSPM vendors will show you a connector library that spans AWS, Azure, GCP, Microsoft 365, Salesforce, Snowflake and a long list of other platforms. What the demo does not show you is what happens when your specific version of a legacy ERP system does not match the connector’s assumptions or when your on-premises database sits behind a network segment the cloud-native scanner cannot reach without significant architectural change.

Enterprise environments are heterogeneous by nature. Palo Alto Networks’ market analysis puts the DSPM market on a trajectory toward $2 billion by 2025, growing at rates between 25% and 37% annually — a reflection of just how aggressively organizations are investing in this space. But investment velocity and implementation maturity are not the same thing. The average large organization runs hundreds of distinct data stores across multiple cloud providers, legacy systems and third-party SaaS applications. Getting DSPM coverage across all of them is not a deployment — it is an ongoing engineering program.

Connectors break when APIs change. New data sources appear with every acquisition and product build. Maintaining coverage requires dedicated resources that are rarely factored into the initial business case. Executives should push their vendors on exactly which environments will have full coverage at go-live versus which ones are on a roadmap with no committed timeline. The distinction matters enormously because a DSPM deployment with significant coverage gaps gives a false sense of security that can be more dangerous than no deployment at all.
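The go-live versus roadmap question can be tracked as a simple inventory rather than left to the vendor's slide deck. This is a minimal sketch under assumed inputs: the store names and the status labels (`full`, `partial`, `roadmap`, `none`) are hypothetical and would come from your own data store inventory.

```python
# Hypothetical inventory: data store -> connector status at go-live.
INVENTORY = {
    "aws-s3": "full",
    "snowflake": "full",
    "m365": "partial",
    "legacy-erp": "roadmap",
    "onprem-oracle": "none",
}


def coverage_report(inventory: dict[str, str]) -> dict:
    """Summarize the share of known stores with full coverage and list the gaps."""
    total = len(inventory)
    full = [name for name, status in inventory.items() if status == "full"]
    # Anything short of full coverage is reported as a gap, including roadmap items.
    gaps = sorted(name for name, status in inventory.items() if status != "full")
    return {
        "full_coverage_pct": round(100 * len(full) / total, 1) if total else 0.0,
        "gaps": gaps,
    }


print(coverage_report(INVENTORY))
```

Treating `partial` and `roadmap` as gaps is a deliberately conservative choice, so that the headline number cannot overstate coverage.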

This is a point worth reinforcing with your procurement team: Gartner’s Market Guide for DSPM explicitly flags that organizations can no longer separate data visibility from data control — and that coverage depth, not just breadth, is the critical variable when evaluating platforms.

Alert fatigue arrives faster than expected

A fully operational DSPM deployment in a large enterprise will generate findings at a volume that most security operations teams are not built to absorb. The irony is that the better the tool works, the faster alert fatigue sets in.

Risk prioritization is the answer in theory. In practice, prioritization logic requires ongoing tuning that takes months of calibration with your specific data environment. Varonis, in their DSPM guidance for CISOs, makes the point directly: The goal should not be to generate a list of findings but to surface meaningful, actionable alerts that can be remediated — ideally with automation doing the heavy lifting. Most implementations fall well short of that standard in the early months.

In the meantime, analysts are triaging hundreds of findings per week, many of which turn out to be acceptable risks or known exceptions. Teams burn out. Findings get acknowledged and deprioritized. The board dashboard shows a healthy posture score that no longer reflects ground reality. Zscaler’s analysis of cloud data security challenges identifies this precisely: Security teams need AI and ML-powered prioritization not just to reduce noise but to help analysts focus effort on the data exposures that could realistically lead to a breach.

This is not an argument for turning off the tool. It is an argument for honest capacity planning. If your security operations team is already stretched, a DSPM deployment without additional analyst headcount or a meaningful automation investment is not going to improve your security posture. It is going to add a new category of noise to an already overloaded function.
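To make the capacity question tangible, here is a minimal sketch of the kind of prioritization logic that has to be tuned. It is an assumption-laden illustration, not any vendor's scoring model: the weight tables, the `Finding` fields and the `accepted_ids` exception list are all hypothetical.

```python
from dataclasses import dataclass

# Assumed weights; real values would come from calibration against your environment.
SENSITIVITY = {"public": 0, "internal": 1, "confidential": 3, "regulated": 5}
EXPOSURE = {"private": 1, "org-wide": 2, "external": 4, "public-link": 5}


@dataclass(frozen=True)
class Finding:
    id: str
    sensitivity: str  # key into SENSITIVITY
    exposure: str     # key into EXPOSURE


def triage(findings, accepted_ids, top_n=10):
    """Drop accepted exceptions, then return the highest-scoring findings first."""
    active = [f for f in findings if f.id not in accepted_ids]
    ranked = sorted(
        active,
        key=lambda f: SENSITIVITY[f.sensitivity] * EXPOSURE[f.exposure],
        reverse=True,
    )
    # Cap the queue at what analysts can realistically work.
    return ranked[:top_n]
```

Setting `top_n` to what the team can actually close in a week turns the capacity conversation into a number: everything below the cut is risk that is being carried, not triaged.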

What good looks like

None of the friction described here is insurmountable. Organizations that get DSPM right tend to share a few common attributes that have nothing to do with which vendor they chose.

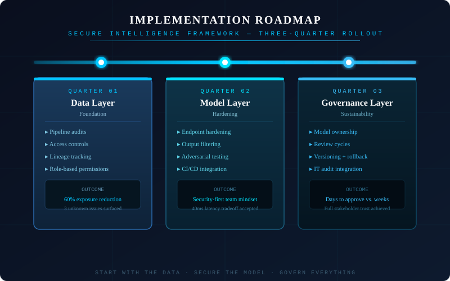

They treat DSPM as an organizational change program, not a technology deployment. They invest in governance structures before they deploy scanners. They define data ownership at the business unit level with clear accountability, and they build that accountability into how people are measured and managed. They budget for the classification work alongside the tooling. They phase their integration roadmap honestly, scope the first phase to environments where coverage will be complete, and build confidence before expanding.

They also pay attention to what Microsoft’s research on enterprise data security posture flags as the underlying imperative: Organizations must stop seeing data security as a collection of individual tools and start treating it as a holistic program anchored in measurable business outcomes. That shift in framing changes everything — from how the board conversation is structured to how remediation accountability is assigned across the business.

Most importantly, they have executive sponsorship that goes beyond signing the purchase order. The CISOs who successfully land DSPM programs are the ones who have a CFO, COO, or CEO who understands that data security risk is a business risk — and who is willing to hold business unit leaders accountable for their piece of it.

DSPM, at its best, gives your enterprise the situational awareness it needs to make informed decisions about data risk. The organizations that turn that awareness into genuine security improvement are the ones that walk in with eyes open: prepared for the friction, staffed for the remediation work and governed for the accountability.

This article is published as part of the Foundry Expert Contributor Network.
